The Water Robotics Story

Teja Vinukollu is building a physical AI model (currently 70B-parameter) that reads the human body through pressure data — and moves the surfaces your body touches. This is the origin story.

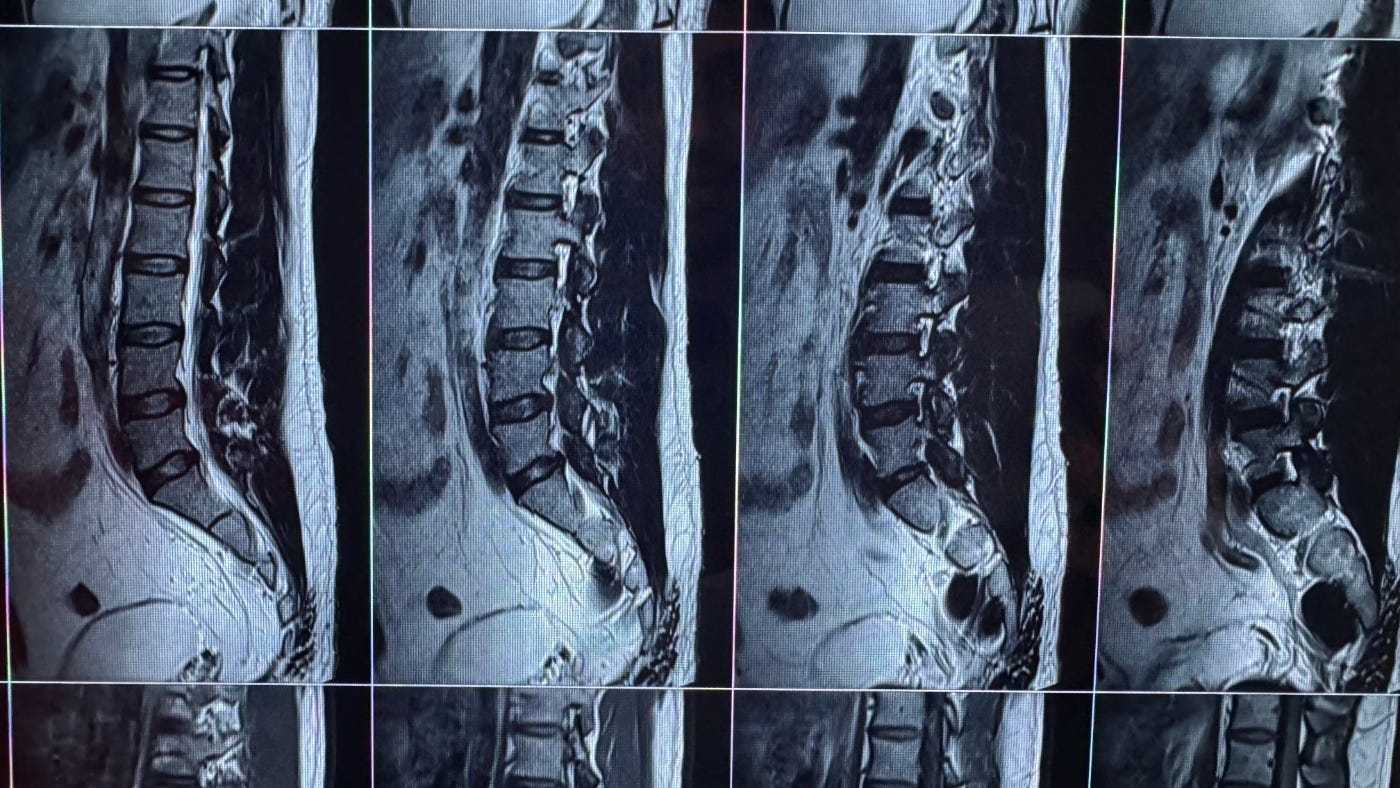

“During the remote work era, I was working from home, sitting on my bed, typing for twelve to fourteen hours a day. My back deteriorated. The pain became chronic. MRI scans showed spinal compression. I began to wonder why is my bed not like a Tesla.”

“Elon Musk has technically built a robot that has four wheels. A Tesla car is a robot. It has sensors. It has motors. It can see. It is a computer that can make decisions.”

“I started wondering — why can’t other consumer products be robots too?”

AI has gotten good at two of the five human senses.

Language models process text. Vision models process images. Both work because the training data exists on the internet in enormous quantities. You can scrape it.

Touch is different.

Kinetic data, biomechanical data, and pressure data generated by real human bodies on real physical surfaces — this data barely exists as a dataset. You cannot scrape it. You have to build hardware, put human bodies on it, and collect it night after night, day after day.

Water Robotics builds AI models for touch: six on-device models today, a 70B-parameter cloud model that augments them, and eventually one foundation model for the body. It also builds the hardware around them: furniture that learns your body and changes shape to match your posture.

The models form an interconnected family, a sequential pipeline in which each model’s output feeds the next, that lets the physical surface understand the living body on top of it. They operate on pressure data gathered from Water Robotics’ own hardware products.

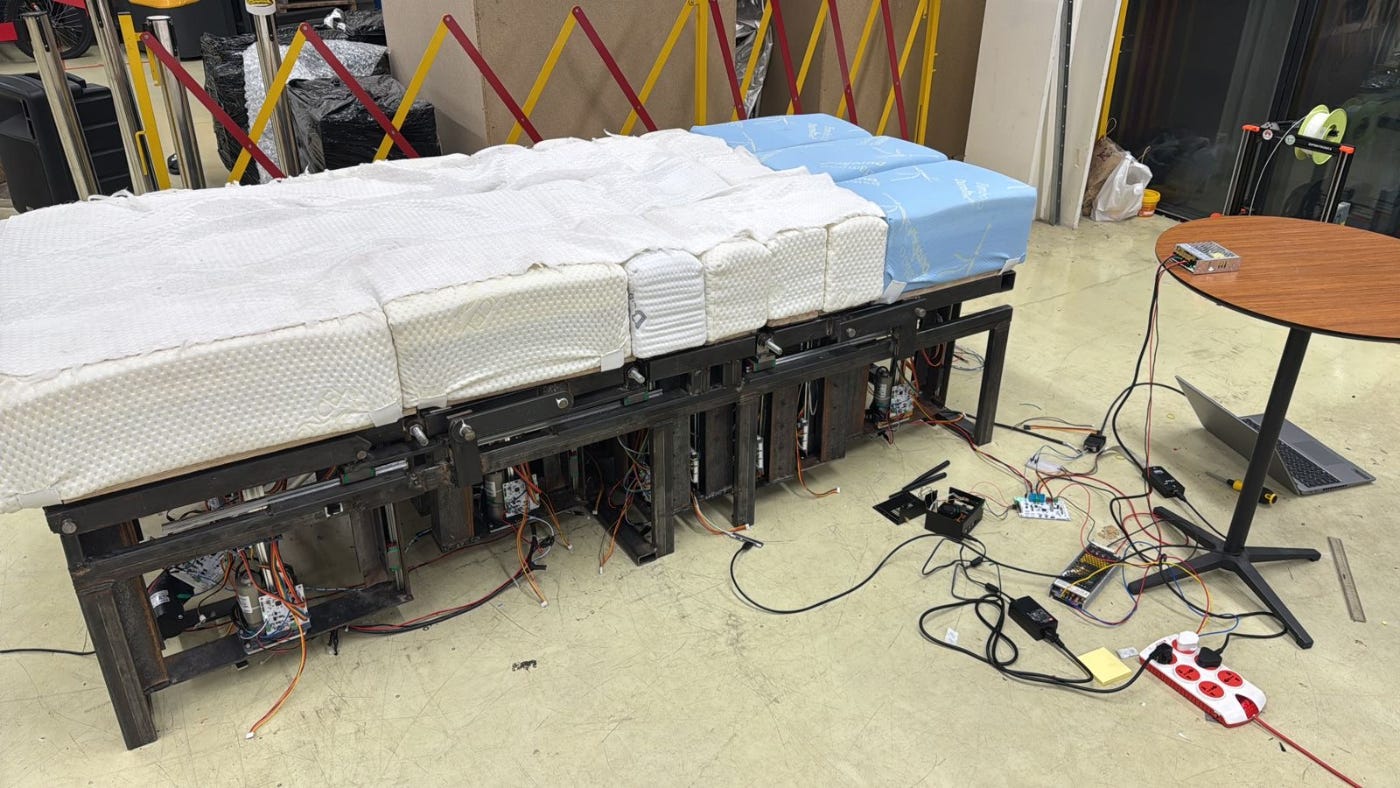

The hardware is being built in two forms today — a chair and a bed.

The bed has 10,752 pressure sensors and fifty motors that adjust in sync with your breath, millimetre by millimetre. You sleep on it for eight hours, unconscious, generating uninterrupted biomechanical data every night — the ideal training environment for the RL engine.

The chair has 1,048 pressure points and a simpler mechanical architecture, but runs the same models. It collects data while you work, sit, read, and move through your day.

The bed knows your body when you are unconscious. The chair knows it when you are conscious. Together, they build a twenty-four-hour picture. And the models get sharper with every hour: the more data they learn from, the more precise the adjustments and the deeper the switching cost.

“Our company creates kinetic motion data of humans — primarily biomechanics data from human body movement on surfaces.”

“Thinking about it, this will be like an Apple ecosystem — this family of AI models will be similar to how iOS for Apple.”

Once it goes to market, Water Robotics will learn from human-body data at scale.

Every bed they ship, every chair they deploy, every night a user sleeps or sits on them — the dataset grows. This is not data you can scrape from the internet. This is data you can only collect from real human bodies on real physical surfaces.

Their population model is already trained on over three million labelled biomechanical samples. Six on-device models on the bed handle sensing, identification, posture, keypoints, and actuation. The bed works without internet. When it is online, data flows up to a 70B-parameter cloud model that reasons across every deployed bed and sends decisions back. Learning happens both ways. Each deployed unit generates continuous new training data. No competitor can build this dataset without shipping the hardware at the same scale.

The Water Family — six models on-device today, a 70B-parameter cloud model, trained on proprietary data, running on proprietary hardware — has no dependency on OpenAI, Anthropic, or Google. The intelligence is native. The bed works whether the internet is up or not.

The pipeline is a scaffold. Language models started this way — tokenisers, parsers, intent classifiers, generators, all separate — until transformers collapsed them into one. Vision went the same way. Water Robotics is running the same play for touch. Every bed deployed feeds the 70B more data. The six models start folding into it. The end state is a single foundation model for touch — one model that knows what a human body is, what it is doing, and what the surface beneath it should do in response.


This is not an abstract risk. Teja lived it at Zapr: the platform pulled the rug, and the company died. It is the same trapdoor under every AI startup built on rented intelligence today. Water Robotics owns its models, owns its data, owns its hardware. There is no rug to pull.

Water Robotics wants to own the full stack.

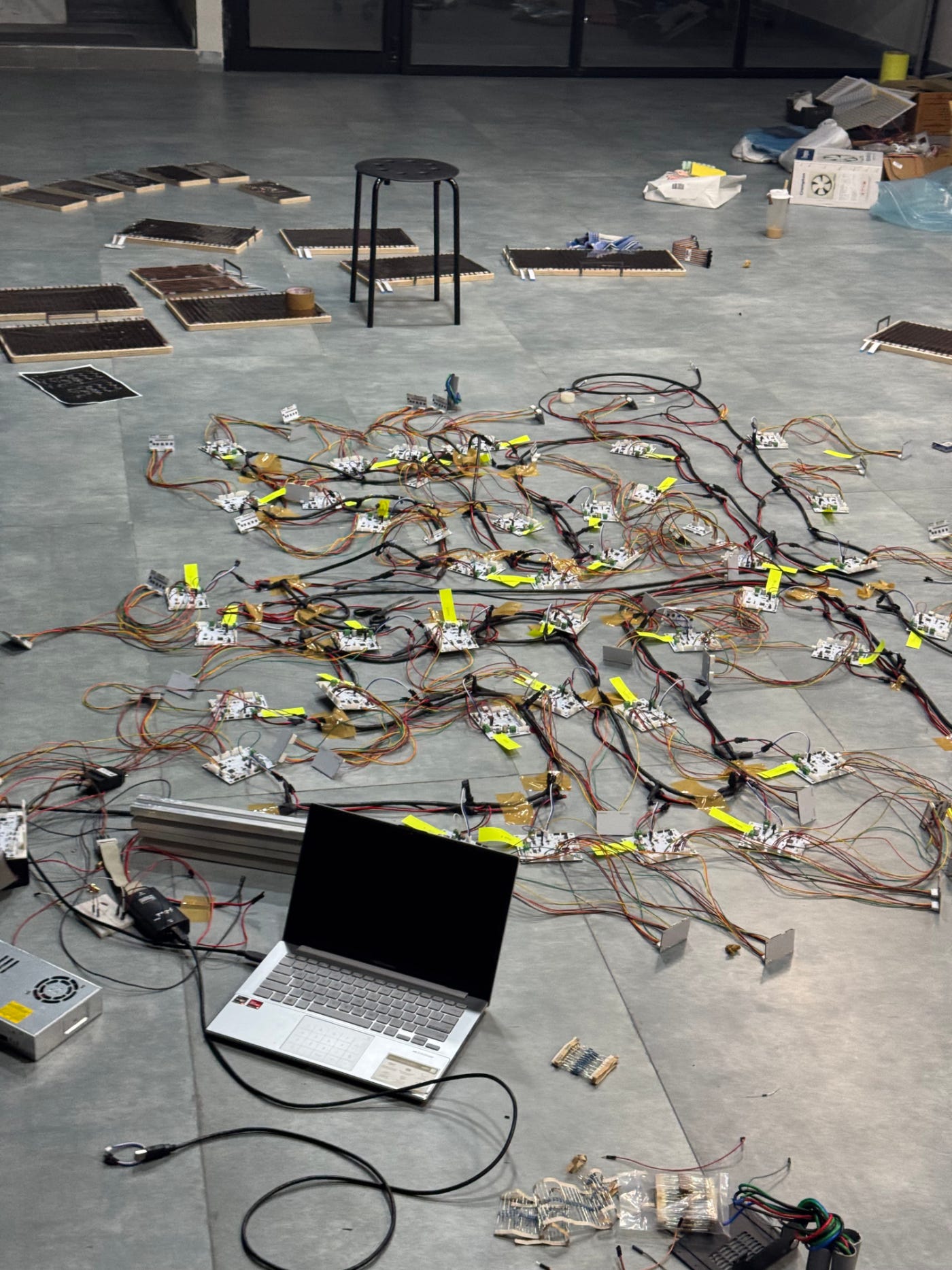

This is the foundation of everything Water Robotics is.

A Montessori in Andhra Pradesh

Teja was born in a government hospital in a small town called Ongole in Andhra Pradesh.

On the bed right next to his mother was a Muslim couple. Their baby was born minutes apart from Teja. A relative came to the ward, picked up the wrong baby — the fair-skinned one — and started celebrating. Then someone pointed to the other bed. That is your baby.

This story stayed with Teja. Not the colour prejudice — that part is ordinary. What struck him was the randomness. He could have been born in the next bed. He had no control over it.

And taking pride in something over which he had no control felt, to him, utterly irrational.

“I can never be proud of something I have no control over. I will be proud of the work I have done. But I can never be proud of something that was pure lottery.”

So he went looking for things that transcend the lottery.

Things that work the same regardless of which bed you were born in, which side of the border, or which colour of skin.

He found math, physics, chemistry — first principles that do not change with people.

“Some people call it first principles. I call it math, physics, chemistry. It doesn’t matter which colour your skin is. Math works equally for both of us.”

He was the class topper. First rank, every year.

"I don't recollect studying hard, to be very honest. But it's probably my parents trying to push me."

The Montessori approach shaped something in him.

He did not learn by memorising. He learned by absorbing systems — how things connect to other things, how patterns repeat across domains, how a single principle can explain seemingly unrelated phenomena.

"My father was a teacher. He made me study. But the Montessori method — it doesn't teach you to memorise. It teaches you to see how things connect. That shaped everything about how I think."

This would matter later. The ability to look at a Tesla and think "that is a robot with four wheels" and then ask "why can't a bed be a robot too" — that is systems thinking applied to the physical world. It started here.

The catalyst

In the eighth standard, Teja met a maths teacher named Raju Sir.

Raju Sir was lighthearted. He cracked jokes. He did not take mathematics seriously — or rather, he took it seriously enough to make it feel like play. Something clicked. Teja opened the first chapter of the eighth standard textbook and solved a problem. Then the next. Then the entire book.

"From one chapter, I went to all the chapters. Then I went to ninth standard, then tenth, then intermediate. In one year, I finished everything."

By the time he sat for the AIEEE — one of India's most competitive engineering entrance examinations — his ranking made the local Telugu newspaper.

He secured All India Rank 6. In Ongole, that meant the whole town knew.

What matters about this period is not the rankings. It is what they reveal: a mind that, once ignited, does not stop at the boundary of the syllabus. He did not study maths because it was assigned. He studied it because it was there.

BITS Pilani

BITS Pilani changed everything.

At BITS Pilani, he was distracted enough to see his GPA crater. The academic identity that had defined him for fifteen years stopped mattering.

"Everyone was scared to talk to me because I used to be arrogant. And they eventually stopped talking. That lack of human connection was very painful."

With his grades gone and his identity in pieces, Teja did something unexpected.

He started paying attention to people. He studied psychology — Carl Jung, cognitive functions, and why the same person behaves differently in different situations. He developed what he calls an emotional antenna.

"I started appreciating human emotions. Why do people do stuff. How does the same person behave differently in different scenarios. I got into psychology, Carl Jung, Cognitive Functions, all of it."

The hospital birth story, the question of lottery, the search for first principles — all of it crystallised at BITS. He stopped caring about derived identities. He started looking for what unifies rather than what divides.

"I started thinking, what is it that unifies all of us? It's fundamentally the things that don't change with people. Some people call it first principles. I call it math, physics, chemistry."

He left BITS with something unexpected: an interest in human psychology that most engineers never develop, and a way of thinking built on first principles rather than convention.

First few jobs

After BITS, Teja moved through seven companies over roughly a decade. Each one taught him something. Together, they taught him everything he needed to build Water Robotics.

His first job was at Verizon in India. He lasted a few months. The work was not bad. It was empty. He describes it as using one-millionth of his capacity. He left.

He moved to Kony Labs in Hyderabad, a product company building enterprise mobile platforms. It was better — real product work, real engineering — but still not what he was looking for.

Teja left Kony in 2013 and went to the University of Washington for an MBA.

UW is not just a business school the way Indian business schools are. It is a research university with marine biology and civil engineering and a dozen kinds of physics under one roof.

The MBA was in the morning — one hour a day, alternate days — which left most of the day free. He filled the evenings by joining two more programmes at the same university. A screenwriting certificate at UW. And a certificate in User Centered Design from the Department of Human Centered Design & Engineering.

HCDE is the one that mattered.

“Before 2013, at Kony, I used to design systems. I felt the design was right because I solved my problem. But something wasn’t feeling right. There was a gap I couldn’t name.”

HCDE filled that gap. The course was about designing for a person who is not you: how to observe them, what questions to ask, how to separate what they say from what they do. He took it every evening after his MBA class.

“At every company after Kony, I worked on experiences. Customer experience at Wooqer. Mobile experience at ZAPR. Surface experiences at Microsoft. Multiplier was experiences again. It was all the same skill.”

Two other experiences from those years influenced Teja.

The first was a Sony VAIO Duo 13 laptop, and carpal tunnel syndrome.

He bought it in 2013. It was a premium machine, priced like a MacBook Pro. But the design was wrong. Long video editing sessions and coding on a cramped trackpad gave him carpal tunnel attacks that lasted roughly two weeks each. He did not connect the cause to the machine until early 2014.

The fix was a MacBook Pro. He switched, and the attacks never came back. It was the first time he paid attention to the surface where his body met a machine. A badly designed surface caused weeks of pain. A well-designed one erased it. That realisation is what pushed him into the UCD certificate at HCDE.

The second was hydroponics. A classmate, James Fenske, had an idea for a small home machine that could grow plants indoors, controlled from a smartphone. Seattle had just legalised recreational cannabis, and James wanted to build the box for that, but the principle was general: deliver the right nutrients in the right quantities — calibrated to the milligram per litre — with the right light angle, and a plant will grow anywhere. Teja built it with him as an HCDE project in 2014.

Then Wooqer, pitched as the Indian Salesforce for retail. Eight months. He ran everything: product, mobile, and engineering. It was chaotic, underfunded, and taught him a lot about building products from scratch.

Ola

Then Ola. In 2015, Ola was the place to be.

“That was the rage. Ola used to be everything in 2015.”

Teja joined the product team and learned something that would shape how he thinks about every product he would ever build: data tells stories if you know how to read it.

The problem was deceptively simple — out of 100 people who open the Ola app, how many end up booking a cab? When Teja joined, the number was around 75%. His team moved it to 84-85%.

Two interventions stood out. First, he introduced upfront pricing on the booking screen. Previously, a rider might see a range — Rs 100 to Rs 180 — with no certainty about the final fare. Teja’s team pinned it. This is the price. This is what you will pay. Conversions jumped.

Second, Teja noticed something about the map. When a user opened the app and saw an empty map — no cabs nearby — they would not even try to book. But Teja knew that if they pressed next and confirmed a booking anyway, there was a 20% chance a cab would be assigned. The empty map was killing bookings that would have worked. So they started showing cab availability indicators — dots, movement, visual signals of life.

“If the map is empty, people don’t click. But excessively showing availability also has downside, which was breaking trust with the platform. Balancing it was the art.”

He suggested dark mode for the app in 2016, years before Apple introduced it in iOS. His reasoning was practical: most rides happen at night. An OLED display spends energy lighting every non-black pixel, so a dark interface saves battery. And for a user sitting in the back seat of a cab at 11 pm, a bright white screen is a flashlight in their face.

“Back then in 2016, I asked these people to use dark mode. A customer’s battery is dying. It’s an LED display. If it uses black pixels, it doesn’t need to light up. The battery will last longer. And also, it’s less strain.”

The deepest lesson from Ola was about people. The company operated at an enormous scale — hundreds of thousands of drivers, millions of rides, data flowing through everything.

Ola’s customer base and Uber’s customer base were different populations. In 2015 and 2016, software engineers and white-collar professionals used Uber. Government employees, traders, and the broader middle class used Ola. The same city, the same roads, the same basic service — but the product’s personality attracted different people.

Product is identity. He filed this away in his mind.

“The company had crazy high IQ people. But I guess we as a company were lacking in the EQ department.”

He watched attrition hollow out institutional knowledge.

Good people left. The people who replaced them had the data but not the context. Products that should have compounded over the years stalled because the team that understood them turned over.

It was his first real look at how organisational culture directly affects the quality of a technology product.

Zapr

Then Teja moved to Zapr.

Zapr had built a tiny SDK embedded on Android phones. Every four minutes, the SDK would wake the phone, record a seven-second snippet of ambient audio, fingerprint it against a database, and determine what the user was watching or listening to — without storing the audio itself. Samsung was a customer. The technology was real.

“This is how the apps listen to us.”

Teja watched the company raise money, watched it grow, watched the product work — and then watched Google kill the background audio recording API on Android.

“What it did was when you try to record in the background, it will show that the microphone is being accessed. That made people suspicious about what’s happening in their phones, and thus Zapr’s moat started declining.”

Overnight, Zapr’s entire technical foundation disappeared. A company with real customers, real revenue, and real technology — gone, because it was built on someone else’s platform.

The lesson stayed with him: if your technology depends on a platform you do not control, you are fragile. If you need someone else’s model in the middle — an LLM, an API, a third-party service — you are a wrapper. And a wrapper’s terminal value is fundamentally different from a company that owns its own intelligence.

Own your hardware, own your models, and you are not dependent on anyone.

Microsoft Surface Hub

Then Teja arrived at Microsoft. He had wanted to join Microsoft for a specific (weird) reason: Windows Phone. He knew how bad the situation was. But he wanted to make it the best phone in the world.

By the time he joined in 2018, Microsoft had had enough.

“I wanted to join Microsoft to take the Windows Phone from where it was to the best phone in the world.”

“But by the time I joined, they killed it.”

He started on a regular team. Then he was moved to the Surface Hub group — Microsoft’s 55-inch and 84-inch touchscreen whiteboard displays, designed for meeting rooms.

These were Rs 15-20 lakh devices. Niche hardware, small team, high expectations.

“I was heading the product management for Surface Hub. I just wanted to make the best device ever. Like, every device that’s created in the world should be used by people and should be great.”

He spent three years thinking about how physical surfaces interact with human beings. How a screen should respond to touch. How a conference room device should understand who is in the room, what they need, and how to make the meeting better without anyone pressing a button.

Panos Panay — who led Microsoft’s hardware division — became an inspiration. Not because of any specific product, but because of the craft. The obsessive attention to how an object feels in your hands, how the hinge moves, what the screen does when you touch it.

And then there was Steven Bathiche. Bathiche led the Applied Sciences group at Microsoft — the research lab behind the original Surface Table.

The original vision was not “a table with a screen.” The original vision was: every surface should know who you are. You put your phone on the table, and the table knows what the phone contains and can interact with it. You rest your hands on it, and the table reads the objects near your fingers. The surface understands the things placed upon it.

That phrase — “every surface should know who you are” — lodged in Teja’s mind. It would not leave. It would become the founding thesis of Water Robotics.

At CES 2026, years later, Bathiche would visit the Water Robotics booth and spend over an hour on the bed. The student had built what the teacher had imagined.

But Microsoft also taught Teja something harder: patience has limits. The pace of a large company — reviews, approvals, consensus, roadmaps — was crushing for someone who wanted to build and ship.

He left after three years, grateful for what he learned but certain he could not build what he wanted to build inside a large organisation.

Multiplier

After Microsoft, Teja joined Sequoia India-backed Multiplier — a Singapore-based company building HR and payroll infrastructure for distributed teams.

He joined as one of the first product people. The product team was one person. He helped build it to eighteen.

He worked directly with the founder and learned things BITS and corporate India never taught him: how fundraising works, how equity gets structured, how a company’s cap table shapes its future. Revenue grew from $200K to $46 million during his time there. He watched a startup scale from the inside — not as an engineer, but as the person responsible for what gets built and why.

There was a payslip bug — a calculation error that affected a small number of employees across multiple countries, each with different tax codes and labour laws. Teja used GPT and Claude to cross-reference the tax logic, find the discrepancy, and patch it.

Not because AI was the right tool for payroll — it was not — but because the bug sat at the intersection of multiple regulatory systems, and the language models were useful as cross-referencing engines.

He saw the shape of what AI could do as an assistant for complex, multi-domain problems.

But Multiplier was someone else’s company.

Water Robotics

It was during the remote work era. Teja was working from home, sitting on his bed, typing for twelve to fourteen hours a day. His back deteriorated. The pain became chronic.

It was around this time that Teja started thinking about his first experience of driving a Tesla.

The whole time, the phrase kept echoing: every surface should know who you are. His back still hurt from the years of working on beds and bad chairs. The idea was fully formed.

Teja left Multiplier to build it.

The signal was loud and clear.

He fused three ideas: Tesla’s architecture — sensors, actuators, and autonomous decision-making. Microsoft Surface’s original vision — every physical surface understands its user. And the agent framework from B2B software — autonomous systems that act on your behalf.

“Agents for the physical world. That was my seed thought.”

The Water Family of AI models

This is the model stack introduced earlier. Here is how each layer works.

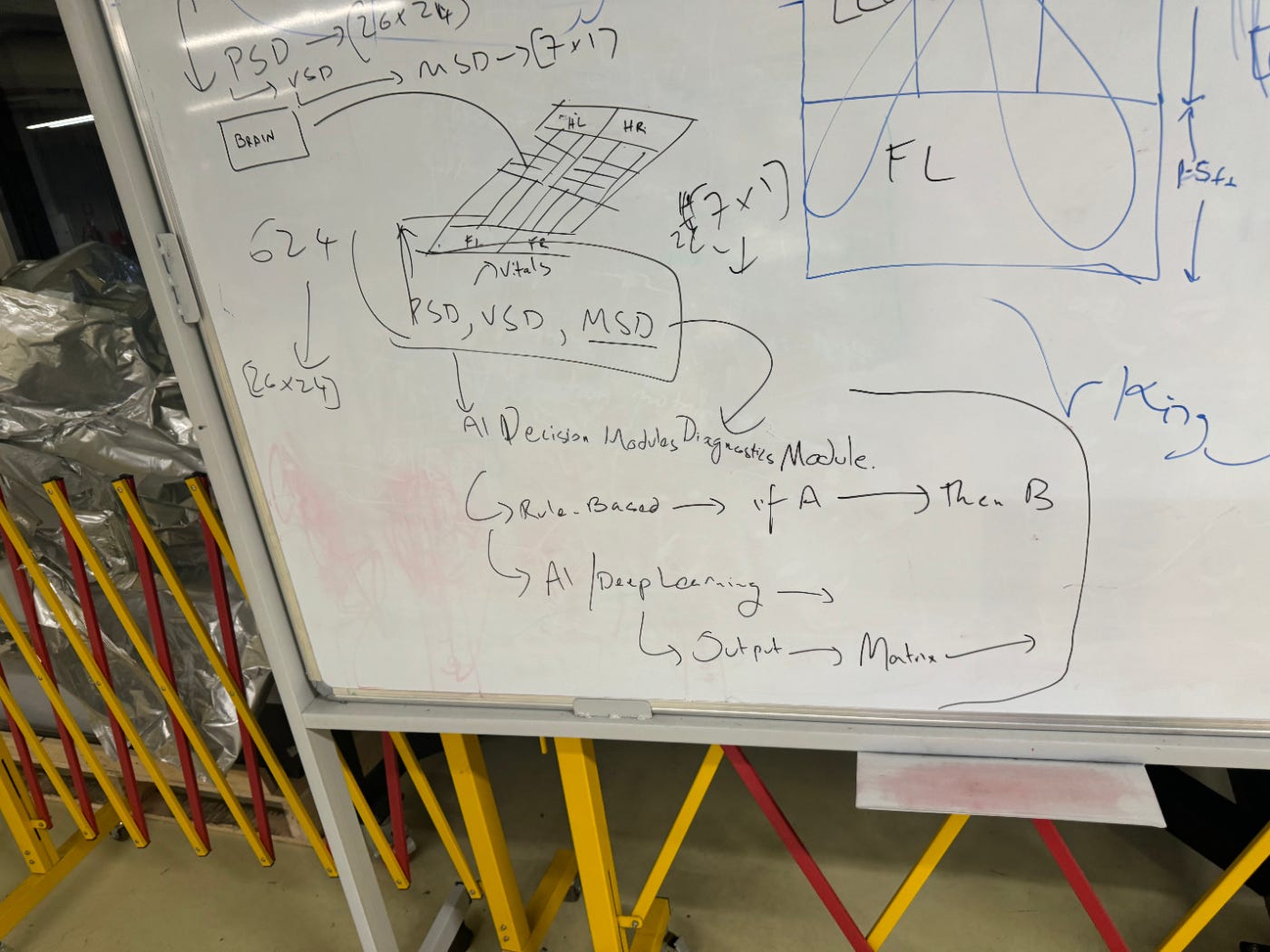

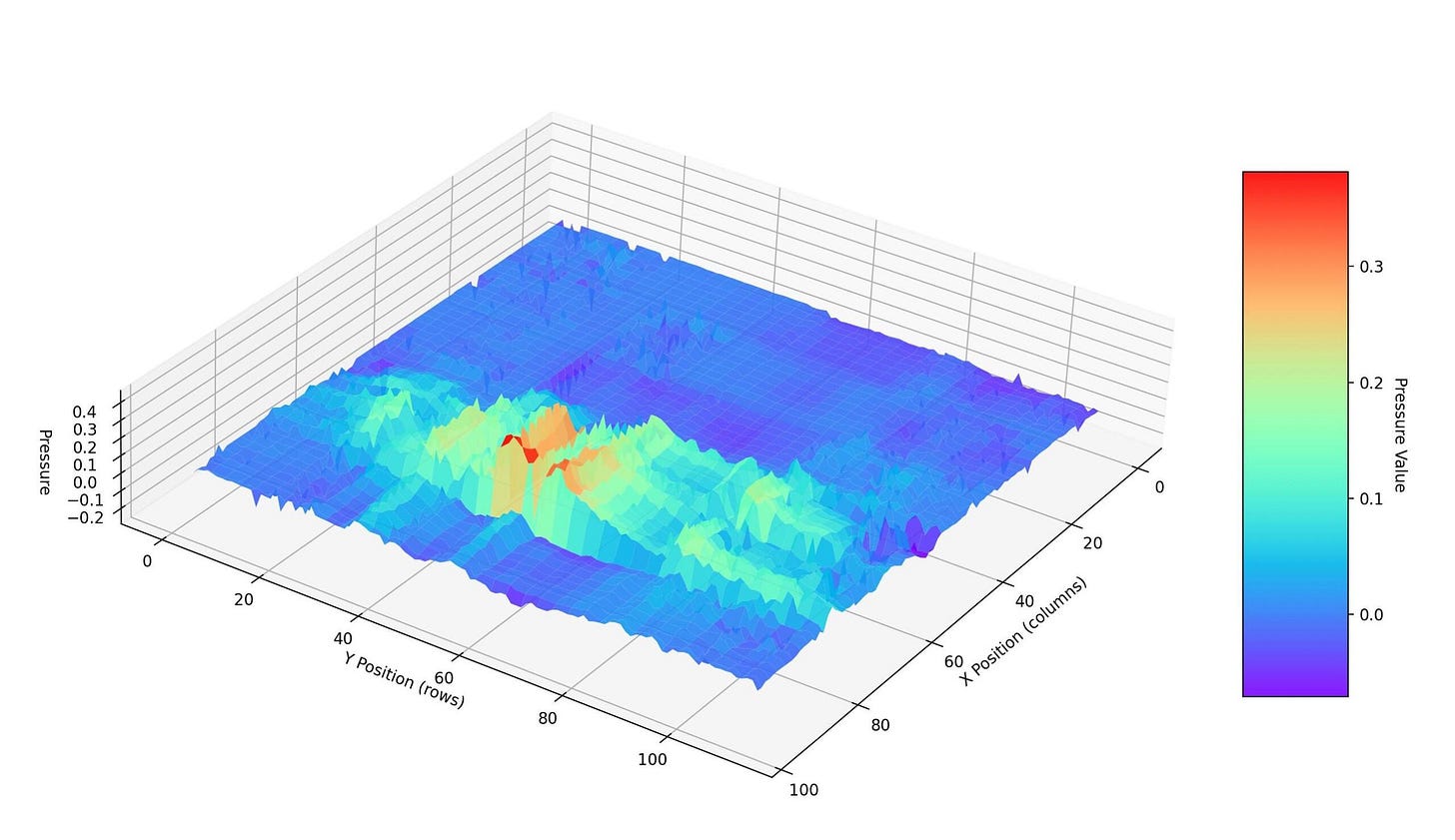

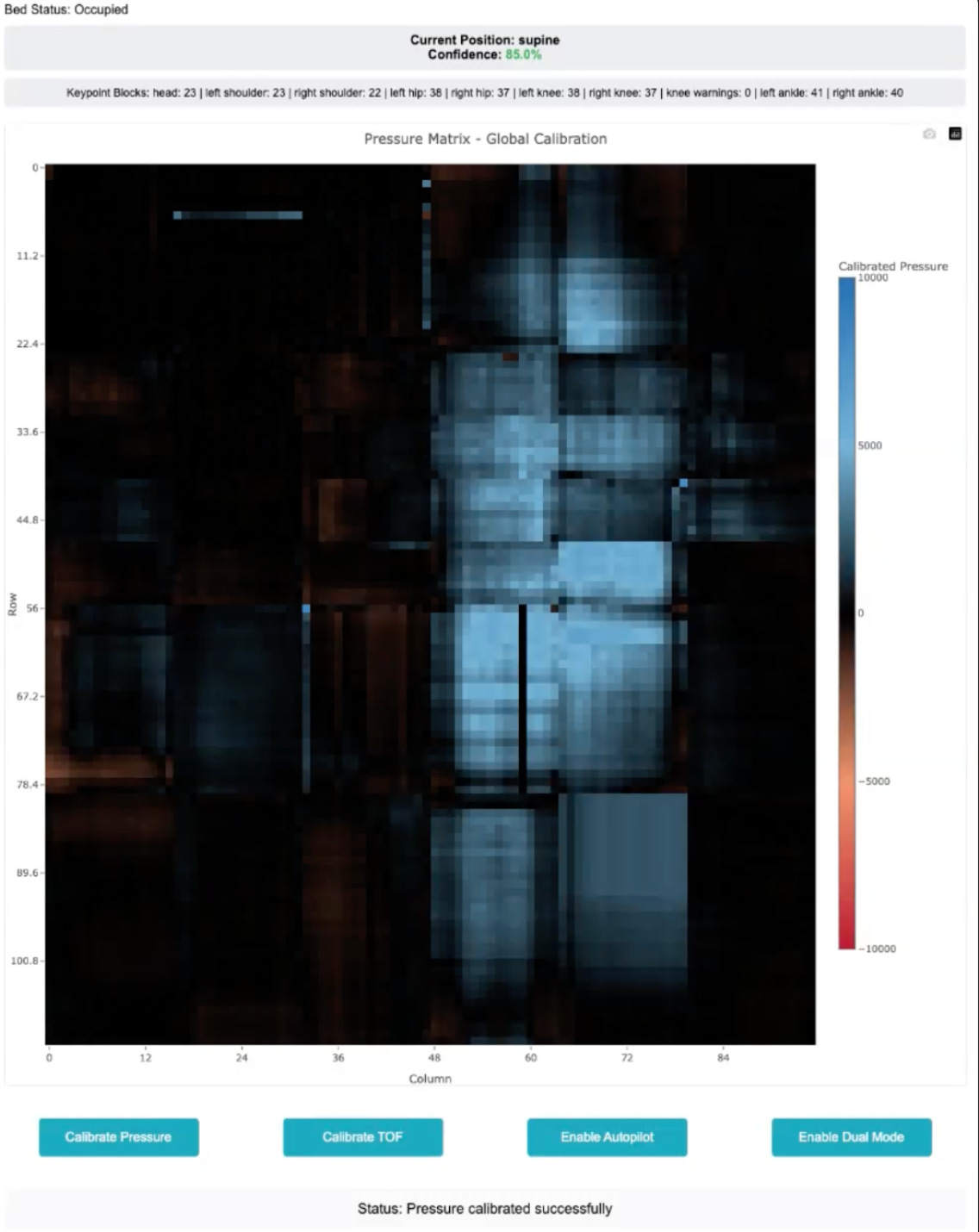

The Water AI Models pipeline has six layers. Each one’s output feeds the next. The six on-device models run locally on the bed; the 70B-parameter model runs in the cloud and augments them.

The first model is Pressure ID. The surface must know who is on it before it can do anything.

When you buy the bed, you lie on it in four standard postures — supine, prone, left side, right side — for about thirty seconds each.

This creates a biometric pressure signature unique to you — a function of your weight distribution, your skeletal geometry, and your soft tissue composition.

Next time you lie down, the bed knows it is you. No buttons, no voice commands, no app. You simply lie down, and the surface recognises your body.

“When you buy the bed, we ask you to lie down in four postures for thirty seconds each. That’s it. From that moment on, the bed knows you. You lie down, it loads your settings. If it doesn’t recognise you, it won’t move. It just stays neutral.”

On a shared bed, Pressure ID first detects how many occupants are present, then draws a boundary segmentation between them, and then matches each body against stored profiles. If it encounters an unrecognised user, it defaults to neutral mode. It will not actuate.
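The matching step can be pictured as a nearest-profile lookup with a rejection threshold. What follows is a minimal sketch, not Water Robotics' implementation: the feature vectors, the use of cosine similarity, the `threshold` value, and the names `match_profile` and `NEUTRAL` are all assumptions made for illustration.

```python
import math

NEUTRAL = None  # hypothetical sentinel: no recognised user, so do not actuate


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_profile(signature, profiles, threshold=0.95):
    """Return the best-matching user id, or NEUTRAL if nobody is close enough.

    `signature` is a feature vector derived from one occupant's pressure map.
    `profiles` maps user id -> vector stored at onboarding.
    The threshold is an illustrative value, not a figure from the source.
    """
    best_user, best_score = NEUTRAL, threshold
    for user_id, stored in profiles.items():
        score = cosine_similarity(signature, stored)
        if score >= best_score:
            best_user, best_score = user_id, score
    return best_user


profiles = {"alice": [0.9, 0.1, 0.4], "bob": [0.2, 0.8, 0.5]}
print(match_profile([0.88, 0.12, 0.41], profiles))  # close to alice's stored vector
print(match_profile([0.5, 0.5, -0.9], profiles))    # matches nobody, stays neutral
```

The design point is the rejection path: when no stored profile clears the threshold, the function returns the neutral sentinel rather than the closest guess, mirroring the rule that an unrecognised body never triggers actuation.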

The second model is Posture Classification. Once the bed knows who you are, it needs to know what position you are in.

This is an ensemble — two complementary approaches running in parallel. The first is a per-user model trained on your onboarding data. The system knows exactly what your specific body looks like in each posture. The second is a population model trained on over three million labelled pressure samples across a diverse range of body types.

Today, the system reliably classifies six postures: supine, prone, left side, right side, left fetal, right fetal.
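The source does not say how the two models' outputs are combined. One common approach is a weighted average of class probabilities, sketched below under that assumption; `ensemble_posture` and the `user_weight` value are hypothetical.

```python
POSTURES = ["supine", "prone", "left side", "right side", "left fetal", "right fetal"]


def ensemble_posture(per_user_probs, population_probs, user_weight=0.6):
    """Blend two probability vectors and return (posture, blended probabilities).

    user_weight is an illustrative value: how much to trust the per-user
    model relative to the population model.
    """
    if len(per_user_probs) != len(POSTURES) or len(population_probs) != len(POSTURES):
        raise ValueError("expected one probability per posture")
    blended = [
        user_weight * u + (1.0 - user_weight) * p
        for u, p in zip(per_user_probs, population_probs)
    ]
    best = max(range(len(POSTURES)), key=lambda i: blended[i])
    return POSTURES[best], blended


# The per-user model favours "left side"; the population model favours "left fetal".
per_user = [0.10, 0.05, 0.50, 0.05, 0.25, 0.05]
population = [0.05, 0.05, 0.30, 0.05, 0.50, 0.05]
posture, blended = ensemble_posture(per_user, population)
print(posture)
```

With these made-up inputs the blend sides with the per-user model, because it carries more weight. In practice the weight could shift toward the per-user model as more of that person's data accumulates.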

The roadmap includes dozens of non-standard sleep positions through continued data collection.

"We are trying to identify how do we account for all those postures and then feed them into the system. That is the future of this specific model."

The third model is Keypoint Detection. Once the system knows your posture, it maps your anatomy — locating your head, shoulders, elbows, spine, pelvis, knees, ankles, and feet on the pressure surface.

The primary method uses vision models adapted for pressure heatmaps rather than RGB images. This gets to about ninety percent accuracy.

Then they layer a biomechanical constraint model on top: if the system knows your height and has stored your body-segment ratios — femur length, torso length, arm length — it can validate and correct the vision model's predictions using the physics of the human skeleton.
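As a rough illustration of that correction, the sketch below takes a predicted joint and slides it along its limb so the segment matches a stored length. It assumes 2D coordinates in centimetres and a hard correction; the real constraint model is not described in detail and may blend the two estimates instead. The function name and the numbers are hypothetical.

```python
import math


def enforce_segment_length(parent, child, expected_length):
    """Move `child` along the parent->child direction so the segment has the expected length."""
    dx, dy = child[0] - parent[0], child[1] - parent[1]
    predicted = math.hypot(dx, dy)
    if predicted == 0:
        return child  # direction unknown, so leave the prediction untouched
    scale = expected_length / predicted
    return (parent[0] + dx * scale, parent[1] + dy * scale)


hip = (50.0, 100.0)
knee_predicted = (50.0, 150.0)  # the vision model implies a 50 cm femur
knee = enforce_segment_length(hip, knee_predicted, expected_length=45.0)
print(knee)  # the knee is pulled back toward the hip along the same line
```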

“We started putting them on, superimposing on top of it, and then we got to around 98-99 percent accuracy.”

With the biomechanical constraints layered on, the team reports keypoint accuracy of roughly 98 to 99 percent.

“At 98 percent keypoint accuracy, we can target individual vertebral segments. That is the resolution you need for therapeutic-grade spinal support. Not 'your lower back.' Your L4 vertebra, precisely.”

The fourth model is Pressure Relief. This is the first model in the stack that actually moves the bed.

It operates as a real-time optimisation loop. Given the current pressure distribution and the anatomical keypoint map, it computes the minimum set of actuator movements needed to bring every body region within clinically safe pressure thresholds.
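One pass of such a loop might look like the sketch below. It is a simple greedy rule, not the optimiser the team uses: only regions over their limit generate a command, which keeps the number of movements small. The region names, threshold values, actuator ids and step size are all placeholders, not clinical or product figures.

```python
def plan_relief(region_pressure, thresholds, actuator_for_region, step_mm=1.0):
    """Return {actuator_id: height_change_mm} for regions above their threshold.

    This is one pass; a real loop would re-run it on every new pressure frame
    until no region exceeds its limit.
    """
    commands = {}
    for region, pressure in region_pressure.items():
        if pressure > thresholds[region]:
            actuator = actuator_for_region[region]
            # Lower the surface under the overloaded region by one step.
            commands[actuator] = commands.get(actuator, 0.0) - step_mm
    return commands


# Placeholder readings for a side sleeper: shoulder and hip carry the load.
pressure = {"shoulder": 70.0, "hip": 65.0, "knee": 20.0}
limits = {"shoulder": 60.0, "hip": 60.0, "knee": 60.0}
actuators = {"shoulder": 12, "hip": 27, "knee": 40}
print(plan_relief(pressure, limits, actuators))
```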

This is particularly critical for side sleepers and pregnant customers, who concentrate an enormous load on the shoulder and hip.

The system does not just make you comfortable. It actively redistributes pressure away from danger zones.

"The pressure relief model is constantly trying to decrease the pressure on any given body part. That is one thing. It never stops optimising."

The fifth model is the Reinforcement Learning engine. This is where the defensibility starts to compound.

When the bed moves an actuator, it monitors your heart rate and respiratory rate in the seconds that follow.

If either spikes, the system interprets this as a disturbance and penalises that action. Over time, the RL agent learns a policy that minimises physiological disruption.

Sleep stages of the user mapped with vitals and pressure:

When a movement is penalised, the system has three fallback strategies: find alternative actuators that achieve the same postural goal, reduce the speed of the movement, or reduce the magnitude.

The RL agent learns which strategy works best for you specifically. This is a per-user model that improves every single night.
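A stripped-down version of that learning loop is sketched below: a reward that penalises a spike in vitals, and a per-user table that tracks which fallback strategy has worked best so far. The 10 percent spike ratio, the reward values and the class name are assumptions, and a real RL agent would also explore and condition on state, which this sketch leaves out.

```python
STRATEGIES = ["alternative_actuators", "reduce_speed", "reduce_magnitude"]


def reward(hr_before, hr_after, rr_before, rr_after, spike_ratio=1.10):
    """Return -1.0 if heart rate or respiratory rate spiked after a move, else +1.0."""
    spiked = hr_after > hr_before * spike_ratio or rr_after > rr_before * spike_ratio
    return -1.0 if spiked else 1.0


class StrategyLearner:
    """Tracks the average reward of each fallback strategy for one user."""

    def __init__(self):
        self.value = {s: 0.0 for s in STRATEGIES}
        self.count = {s: 0 for s in STRATEGIES}

    def update(self, strategy, r):
        self.count[strategy] += 1
        # Incremental mean of the rewards seen for this strategy.
        self.value[strategy] += (r - self.value[strategy]) / self.count[strategy]

    def best(self):
        return max(STRATEGIES, key=lambda s: self.value[s])


learner = StrategyLearner()
learner.update("reduce_speed", reward(60, 61, 14, 14))      # calm response
learner.update("reduce_magnitude", reward(60, 72, 14, 14))  # heart rate spiked
print(learner.best())
```

Because the table is keyed to one person's physiological responses, it only becomes useful after that person has slept on the surface, which is the switching-cost argument in miniature.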

After thirty days, the system has accumulated enough reward signals to make near-zero-disturbance adjustments.

After ninety days, the system has built a detailed model of your specific biomechanics — the kind of data that would take a clinician many sessions to gather manually.

"The next time when we have to move that specific block at the specific speed, it will try to find other possible parts to get the same task done, or it decreases the speed, or it will decrease the height."

Your RL policy is unique. It cannot be replicated without your specific physiological history. Every night you sleep on it, the switching cost deepens.

The sixth model — the next frontier — is Contextual Intelligence.

Everything described above operates when you are unconscious. The bed manages your sleeping body. But when you wake up, it becomes dumb. You have to press buttons. Give voice commands. Tell it what you want.

Contextual Intelligence changes this.

It infers what you are doing from a combination of pressure-sensor patterns, signals from connected devices, and temporal patterns.

An early implementation already exists: when you activate an Apple TV profile, the bed automatically reclines to a viewing position. When playback stops, and your posture shifts toward sleep, the bed transitions to optimal sleep configuration.

No buttons, no commands. The surface understands.

"These things are already happening today. But more and more — when you are conscious or when you are awake — how do we do that in various scenarios? You are working from a bed. You are having intimacy. All of these things, today you need to ask the bed. We want more and more contextual intelligence so that the bed automatically does all of these things."

Now step back and look at the full stack. Six models. Each one feeds the next. Each one compounds the value of every model below it.

The first three — occupancy, identification, posture — execute in under two hundred milliseconds on-device. Keypoint detection and actuation decisions run continuously at low latency, without cloud dependency.

This is on-device intelligence. The bed thinks locally. The 70B-parameter cloud model sits above it, reasoning across every bed in the fleet and pushing back patterns no single bed could see on its own.

"The first three stages — who is on the bed, how many people, what posture — all of that happens in under 200 milliseconds. On-device. No cloud. The bed has to think for itself."

And every model in this stack is surface-agnostic. Pressure ID does not care whether the pressure data comes from a bed, a chair, or a sofa. Posture Classification works the same way. Keypoint Detection works the same way.

The R&D investment in the bed's model stack has near-zero marginal cost when applied to new product categories.

“The end goal of all of this — all the furniture, all the objects, the non-living things in the house — are subscribed to this consciousness.”

“One consciousness that helps all of them help better human experiences and longevity. Today, if you talk about Internet of Things, all of them are technically connected. But none of them are intelligent.”

The existing Internet of Things connects devices. None of them understands the human body.

Water Robotics is building a home-scale biomechanical intelligence layer — where every surface you touch is subscribed to a single consciousness that knows your body.

Six models, one idle bed

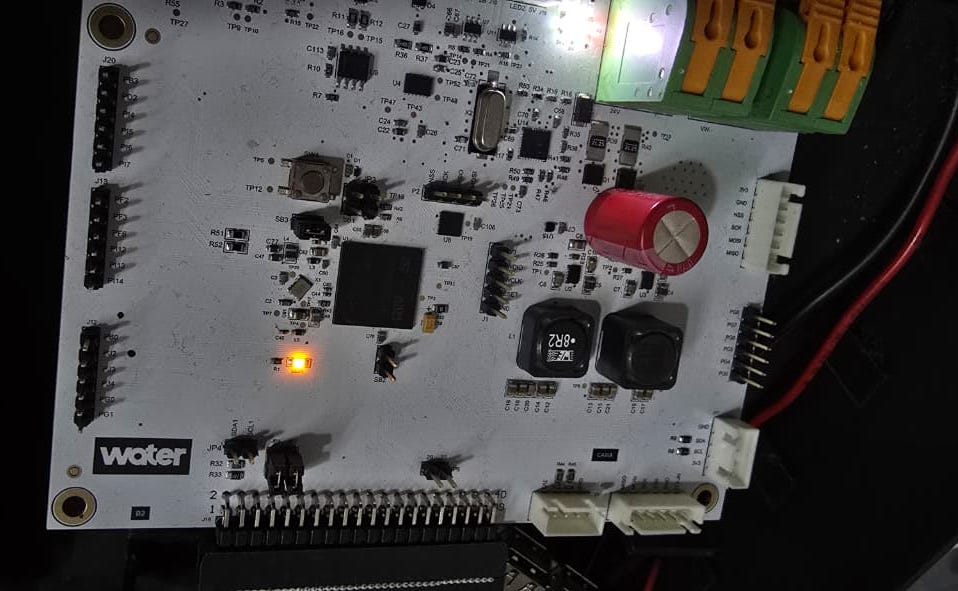

The bed runs six machine-learning models on a Raspberry Pi 5, a board that costs roughly ten thousand rupees and does what an NVIDIA Jetson at forty-two thousand was doing in the previous generation, with no loss of effectiveness. Above the Pi, in the cloud, sits a 70B-parameter model, too large to run on the bed, that augments the six with cross-fleet reasoning.

It works because of one observation Teja’s team built the whole compute stack around: a bed is idle about sixteen hours a day. Training is spread across those idle hours instead of demanded in a burst.

“Cost optimisation was the biggest thing.”

Personal pressure data never leaves the bed. Population-scale models are trained in the cloud only on anonymised, PII-stripped data.

Posture recognition uses a dual-path fusion architecture. The raw pressure matrix is copied twice. One copy goes through a CNN ending in a dense layer, producing a latent vector. The other runs through a set of hand-designed feature extractors — left-right symmetry, contact discontinuities along the spine line, spatial distribution of pressure — and then through a separate dense layer. The two vectors are fused and fed into a final classifier. Two different views of the same pressure field, fused for robustness.
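To make the hand-designed path concrete, here is a small sketch of two such features, left-right symmetry and centre of pressure, plus the fusion step as plain concatenation. The CNN path and both dense layers are omitted, the spine-line discontinuity feature is not attempted, and the function names and exact feature definitions are assumptions.

```python
def handcrafted_features(matrix):
    """Compute simple geometric features from a 2D pressure matrix (list of rows)."""
    rows, cols = len(matrix), len(matrix[0])
    total = sum(sum(row) for row in matrix)
    if total == 0:
        return [0.0, 0.0, 0.0]  # empty surface
    half = cols // 2
    left = sum(sum(row[:half]) for row in matrix)
    right = sum(sum(row[cols - half:]) for row in matrix)
    symmetry = (left - right) / total  # 0 means balanced left/right
    # Centre of pressure, normalised to [0, 1] along each axis.
    cop_row = sum(r * sum(row) for r, row in enumerate(matrix)) / total / max(rows - 1, 1)
    cop_col = sum(c * v for row in matrix for c, v in enumerate(row)) / total / max(cols - 1, 1)
    return [symmetry, cop_row, cop_col]


def fuse(cnn_latent, features):
    """Concatenate the two views into one vector for the final classifier."""
    return list(cnn_latent) + list(features)


# Toy 2x2 matrix with all the load on the left column.
features = handcrafted_features([[4, 0], [4, 0]])
print(fuse([0.2, -0.1], features))  # the latent values here are made up
```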

Keypoint extraction takes the classified posture and the pressure matrix through a CNN and dense layers to output joint coordinates — head, shoulders, hips, knees, heels. The loss function is not pixel regression. It encodes biomechanical constraints: limb lengths, body segment ratios. The network learns human anatomy as a prior rather than treating every frame as a fresh vision problem.
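A loss of this kind could be written as below. This is a sketch under assumptions: it keeps a coordinate-error term and adds a limb-length penalty, whereas the source only says the loss encodes biomechanical constraints, so the actual formulation may differ. The `weight` value and the joint names are illustrative.

```python
import math


def keypoint_loss(pred, target, segments, weight=0.5):
    """Coordinate error plus a penalty for limb lengths that deviate from expectation.

    pred / target: dict of joint name -> (x, y).
    segments: list of (joint_a, joint_b, expected_length).
    """
    coord = sum(
        (pred[j][0] - target[j][0]) ** 2 + (pred[j][1] - target[j][1]) ** 2
        for j in target
    ) / len(target)
    limb = sum(
        (math.dist(pred[a], pred[b]) - length) ** 2 for a, b, length in segments
    ) / len(segments)
    return coord + weight * limb


target = {"hip": (0.0, 0.0), "knee": (0.0, 45.0)}
pred = {"hip": (0.0, 0.0), "knee": (0.0, 50.0)}  # femur predicted 5 units too long
print(keypoint_loss(pred, target, [("hip", "knee", 45.0)]))
```

The second term is what makes anatomy a prior: a prediction whose femur is too long is penalised even when no labelled target is available for that frame.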

Sleep stage prediction is a recurrent network — a GRU — running on heart rate and respiratory rate. The bed does not need a chest strap to read heart rate; it reads it from the pressure pulse of the torso on the mattress. Each five-minute window is compressed into a small feature vector — approximate RMSSD, SDNN, heart rate mean, respiration rate mean — and a two-layer GRU predicts sleep stage at per-minute resolution.
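The four features named above have standard definitions, sketched here for a single window. The sketch assumes the bed has already turned the pressure pulse into inter-beat intervals in milliseconds; that extraction step, the function name and the sample values are not from the source. SDNN is computed as a population standard deviation, though some implementations use the sample form.

```python
import math
import statistics


def window_features(ibi_ms, breaths_per_min):
    """Summarise one window as [RMSSD, SDNN, mean heart rate, mean respiration rate].

    ibi_ms: inter-beat intervals in milliseconds (at least two values).
    breaths_per_min: respiration-rate readings taken during the window.
    """
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    rmssd = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    sdnn = statistics.pstdev(ibi_ms)
    hr_mean = 60000.0 / statistics.fmean(ibi_ms)  # beats per minute, approximate
    rr_mean = statistics.fmean(breaths_per_min)
    return [rmssd, sdnn, hr_mean, rr_mean]


# Made-up values; a real five-minute window would hold a few hundred intervals.
print(window_features([1000, 1020, 980, 1000], [14, 15, 14, 15, 14]))
```

A sequence of these four-element vectors, one per window, is the kind of input a GRU would consume.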

Three jobs run on the Raspberry Pi inside the bed. The first is real-time inference for all six models. The second is training the Pressure ID classifier, which runs once, in the first week after a bed is delivered; this is how the bed learns the specific person who now owns it. The third is fine-tuning the posture model to the new user’s body shape.

Two things run in the cloud. The 70B-parameter Water Family model, reasoning across every deployed bed and chair, feeding higher-order signals back to the six on-device models. And one training job: a pressure-matrix reconstruction autoencoder. It compresses a full-bed pressure matrix to a latent vector and reconstructs it back. Trained on crowd-scale data, the encoder becomes a powerful feature extractor. Attach a small classification head to the latent space and you get a posture model that benefits from population-scale variety without any individual’s raw pressure data ever leaving their bed. Only gradients and anonymised aggregates move.

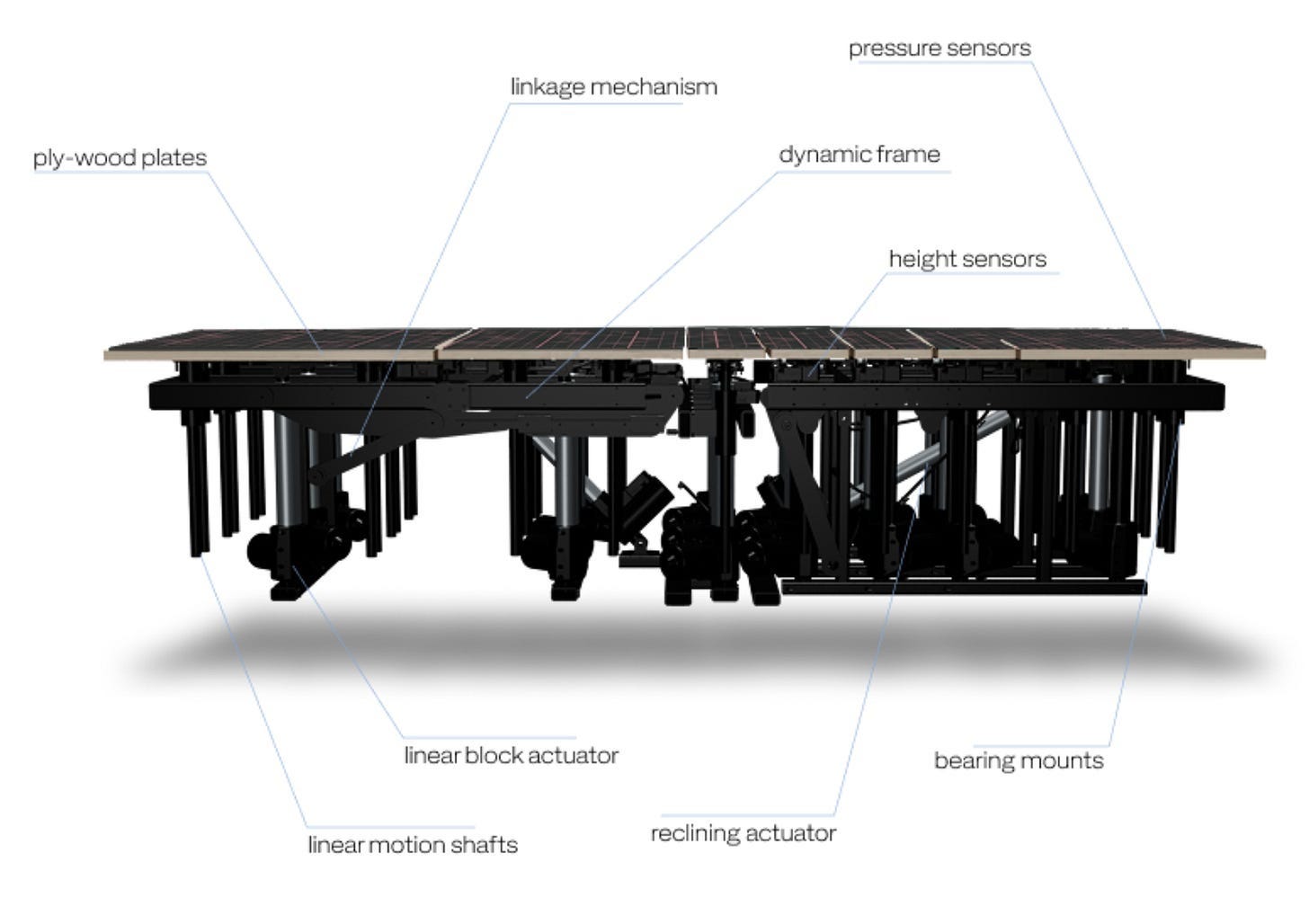

The hardware carries the same metaphor.

“I call all of them brain, spine, arm, skin. The architecture is inspired from the human body. There is no deeper reason than that. You copy what works.”

The bed

The name Water Robotics comes from Bruce Lee.

“Be Water, My Friend.

Empty your mind.

Be formless, shapeless, like water.

You put water into a cup, it becomes the cup.

You put water into a bottle, it becomes the bottle.

You put it into a teapot, it becomes the teapot.

Now water can flow or it can crash.

Be water, my friend.”

The bed is where the intelligence runs first.

Why a bed? You spend eight hours a day on it, unconscious.

The RL engine needs long, uninterrupted sessions — hours where the body is still enough for the models to observe, adjust, and learn from the physiological response. Sleep is the ideal training environment.

And the clinical stakes are real: bad pressure distribution during sleep causes chronic pain, pressure ulcers, and spinal degeneration.

A surface that solves this has immediate medical value.

But the bed was always a starting point. It is the first surface where non-living matter learns to understand a living body. What runs underneath it is the actual product.

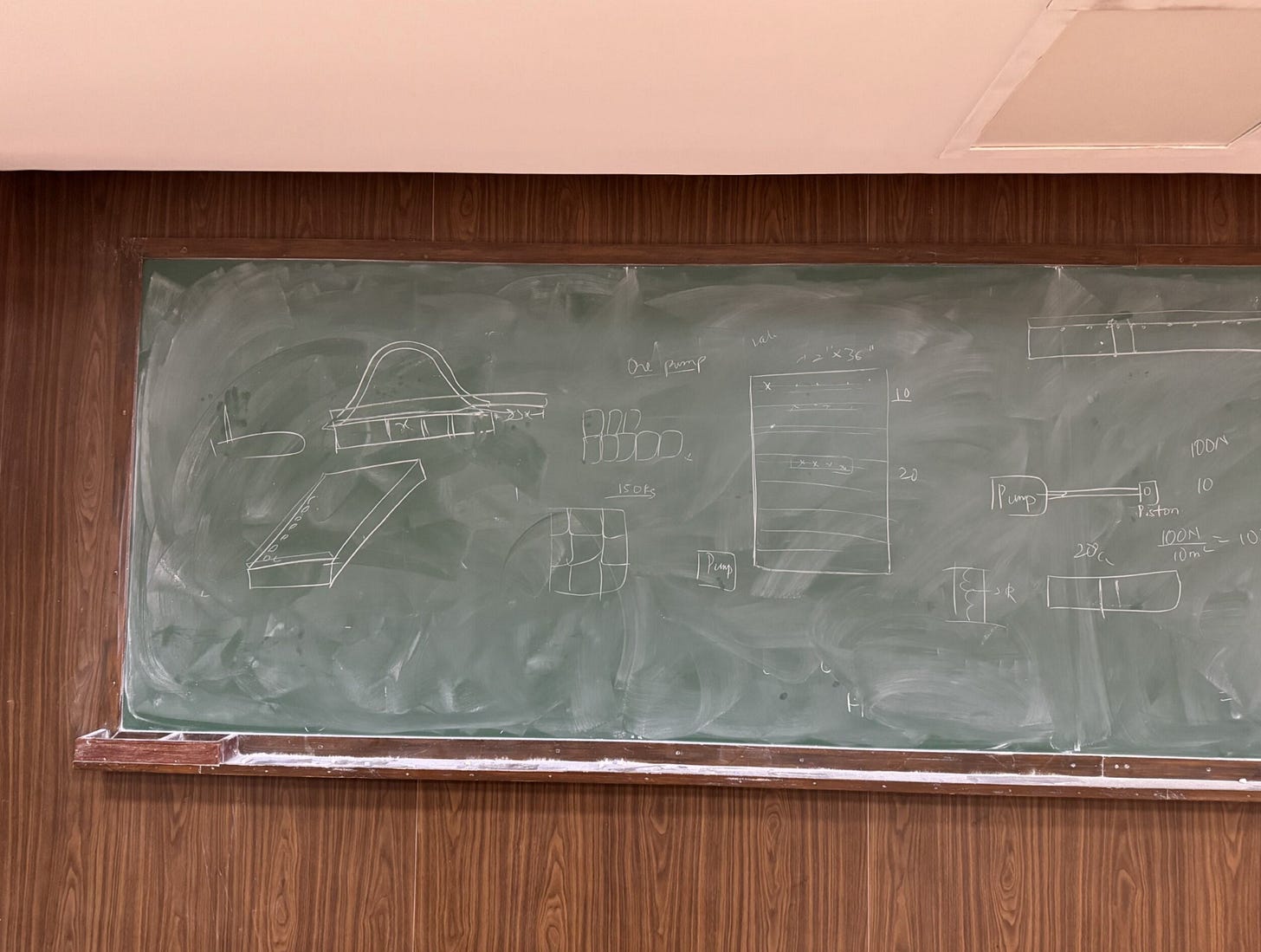

Building the body

Intelligence needs a body. Building that body is not easy. India has no deep mechanical engineering talent pipeline.

Not because the engineers do not exist, but because the good ones all moved to computer science or emigrated.

Teja tried to find experienced mechanical engineers to build the bed’s physical architecture. What he found were people who knew roughly as much as he did.

“They were all young and fresh. They’re at the same level as me in mechanical engineering.”

The first prototype looked promising on paper. Then they tested it under load, and the frame buckled. The force vectors were wrong — the team had not accounted for the dynamic redistribution of human weight as the actuators moved. A plate cracked under pressure. The design was scrapped.

“The first one was a disaster. The force vectors were completely wrong. When you put a human body on it and the actuators move, the weight redistributes dynamically. We hadn’t accounted for that. The plate just cracked.”

The second iteration solved the structural problem but created a new one: noise. Fifty motors generate sound. The bed was supposed to adjust while you sleep. If the adjustment wakes you, the product fails. They spent months on dampening, vibration isolation, and motor selection.

“If the bed wakes you up while adjusting, the entire product fails. That is the constraint. Fifty motors, and the user cannot feel any of them move.”

The electronics were another battle. The initial PCB design from a contracted team cost Rs 3.5 lakhs per bed, making the product economically impossible.

Teja taught himself enough PCB design to redo it from scratch. He brought the electronics cost down to Rs 22,000 per bed. Roughly a 16x reduction.

“The contracted team wanted Rs 3.5 lakhs per bed just for electronics. That makes the product impossible. So I taught myself PCB design. I had no choice. When you can’t find the people, you become the people.”

The frame is aerospace-grade aluminium — the same alloy used in aircraft. Not because Teja wanted premium materials, but because it was the only thing that could handle the load requirements at a survivable weight. The first frame weighed over 200 kilograms. They engineered it down, but it took iterations.

Four versions. Over two years. Each one was a complete redesign, not a refinement. And through all of it, Teja was simultaneously building the AI models.

The hardware and the software had to co-evolve — the models needed specific sensor densities, specific actuator resolutions, specific latency profiles. You cannot build one without the other.

“This is difficult for a pure software company to replicate — the models are co-designed with the hardware.”

The filter we could invert

This bed has to know where the body is.

It has to locate the head, the shoulders, the hips, the knees, and the heels. In real time. Without a camera. Without anything worn on the body. Without asking.

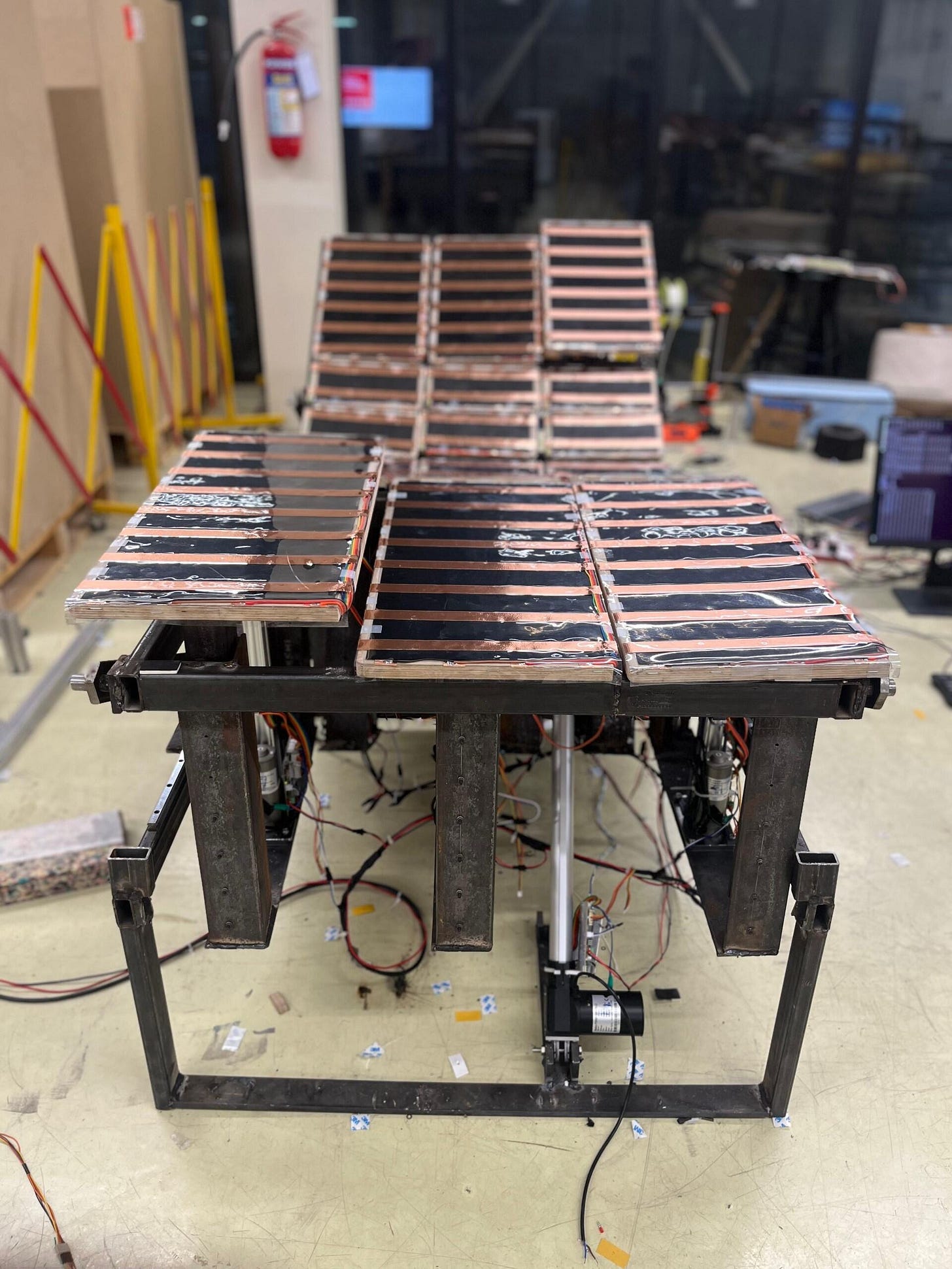

Everything else in the product is downstream of that answer. The forty-two actuated columns, the posture model, the nightly adjustments — none of it works if the bed does not first know what is lying on top of it.

“The hard engineering problem here is not actuation. It is sensing. And sensing a soft body through a soft mattress.”

Water senses the body with a full-bed pressure grid — a continuous piezoresistive film that changes resistance under load. The cell pitch is about 25 mm, which gives a spatial Nyquist limit of about 50 mm. Enough to resolve a shoulder from a hip. Not enough to resolve individual fingers. Anything the mattress blurs beyond 50 mm is lost permanently. The entire mattress design then comes down to one choice that sounds small and is not: where inside the stack do you put the grid.
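The 50 mm figure follows from the sampling theorem. As a worked check, writing Δ for the cell pitch (a symbol introduced here for convenience):

```latex
f_N = \frac{1}{2\Delta} = \frac{1}{2 \times 25\ \text{mm}} = 0.02\ \text{cycles/mm},
\qquad
\lambda_{\min} = \frac{1}{f_N} = 2\Delta = 50\ \text{mm}
```

Spatial detail with a wavelength shorter than 50 mm cannot be represented on a 25 mm grid, whatever the mattress above it does.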

Near the top, close to the body, the readings are sharp. Every contour shows up as a crisp pressure feature. But that position destroys the product. Piezoresistive film at the surface wears out in weeks under nightly shear. And a sleeper can feel the film through the top layer. Both failures are disqualifying in a premium bed.

At the bottom, under everything, the sensor lasts indefinitely and the bed still feels like a bed. But the signal does not.

“By the time the body’s pressure has travelled through every layer of foam and latex, the shoulder and the hip no longer look like a shoulder and a hip. They look like two warm blobs.”

That smudged signal is the real problem. It is not a sensor problem: the film itself is fine. It is a problem of what the mattress does to the signal on its way down. Water placed the sensor neither at the top nor at the bottom. It sits partway through the stack, above the structural layers (rebonded foam and birch ply) and below the comfort layers (silk, latex, super-soft foam). There it reads a signal that has passed through only three layers rather than the entire mattress.

Every decision in the mattress had to satisfy three constraints at the same time.

The first is comfort. A premium continuous sleeping surface. The design target is that pressure variation across body regions stays below the Weber threshold — the just-noticeable difference for cutaneous pressure — at every zone, every posture, at the same time. The team set this at ΔP/P ≤ 0.05, below the lowest published cutaneous JND of 0.07. An aggressive target, not a conservative one.
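The comfort target can be read as a simple check on zone pressures. The sketch below assumes ΔP/P means each zone's deviation from the mean pressure, divided by that mean; the article does not spell out the reference, so treat that choice and the function names as assumptions.

```python
from statistics import mean
from typing import List

WEBER_TARGET = 0.05      # design target stated above
PUBLISHED_JND = 0.07     # lowest published cutaneous JND cited above


def weber_fractions(zone_pressures: List[float]) -> List[float]:
    """Relative deviation of each zone from the mean zone pressure."""
    reference = mean(zone_pressures)
    return [abs(p - reference) / reference for p in zone_pressures]


def meets_target(zone_pressures: List[float], limit: float = WEBER_TARGET) -> bool:
    """True when every zone stays within the limit at the same time."""
    return all(fraction <= limit for fraction in weber_fractions(zone_pressures))


even_load = [10.0, 10.2, 9.8]    # arbitrary units, 2% spread
uneven_load = [10.0, 11.0, 9.0]  # 10% spread
```

Here `meets_target(even_load)` holds and `meets_target(uneven_load)` does not, since a 10% deviation exceeds both the target and the cited JND.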

The second is a deconvolvable signal. Whatever pattern reaches the grid has to retain enough spatial information to recover body landmarks within a few centimetres.

The third is the discrete-column geometry. Water’s mattress is forty-two independent vertical columns, each roughly 260 × 260 mm, each with its own actuator. Below the ply cap, air gaps of about five millimetres separate the columns. Stress cannot jump air. Every load on a column is carried by that column alone.

These three do not agree. Comfort wants soft, continuous, conformal. A clean signal wants stiff, local, close to the body. Discrete columns force load concentration that neither of the first two would tolerate alone — a person sitting on the edge puts their full weight on one or two columns, a four- or five-times load swing with no relief path.

The mattress Water ships is the one point where all three hold at once. No earlier version of it was.

“How do you balance both of them? That’s literally the problem.”

Before any of the math, Teja draws it.

Press a single point on the top of the mattress. The force does not travel straight down. It spreads. In a soft layer, the further it gets from the point of contact, the wider the circle it paints.

“For every point you press, it’s a circle that comes down.”

Each layer of the stack is its own circle — the top layer one shape, the middle another, the bottom another. Now imagine a human lying on the surface. A human is not a point. A human is a large number of points, and every point paints its own circles, and all the circles overlap.

The sensor sees all of this superimposed. The shoulder and the hip each send their own expanding rings through each layer, and by the time those patterns reach the grid, they have already interfered with each other, and the shape of each original contact point has been smeared.

To recover the body from that smudged pattern, the software has to run each layer’s distortion function in reverse. If the top layer’s is f, the middle’s is g, the bottom’s is h, then the signal at the sensor is h(g(f(body))), and the software applies h⁻¹, g⁻¹, f⁻¹ in turn, undoing the last layer first, to get back to what the body was actually doing.

“You have to write inverse of all of these functions, to be able to again predict back — in what posture this person is sleeping.”

Formally, the measured field y(x, t) is the two-dimensional convolution of the body’s contact field with the stack’s combined impulse response, plus sensor noise. In the Fourier domain the convolution becomes a product, and a regularised Wiener inverse, the estimator the team actually uses, recovers the contact field. The inverse is well-posed only where the stack has not attenuated that spatial frequency below the noise floor. Every frequency the stack kills is a frequency that cannot be recovered. The entire mattress design is an exercise in making sure the frequencies the body uses to write its posture, the ones that separate a shoulder from a hip, are not among the ones the stack throws away.
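A one-dimensional toy version shows the mechanics. The sketch below assumes circular convolution, a known three-tap blur kernel, and a constant regularisation term standing in for the noise-to-signal ratio; the real problem is two-dimensional, and none of these names or values come from the team.

```python
import cmath
from typing import List


def dft(x: List[complex], inverse: bool = False) -> List[complex]:
    """Naive O(n^2) discrete Fourier transform, adequate for a short illustration."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(x[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n) for m in range(n))
        out.append(total / n if inverse else total)
    return out


def circular_blur(signal: List[float], kernel: List[float]) -> List[float]:
    """Circular convolution of the contact field with the blur kernel."""
    n = len(signal)
    return [sum(signal[(i - m) % n] * kernel[m] for m in range(n)) for i in range(n)]


def wiener_deconvolve(measured: List[float], kernel: List[float], reg: float) -> List[float]:
    """Regularised inverse: X = conj(H) * Y / (|H|^2 + reg), frequency by frequency."""
    spectrum_y = dft(measured)
    spectrum_h = dft(kernel)
    estimate = [h.conjugate() * y / (abs(h) ** 2 + reg)
                for h, y in zip(spectrum_h, spectrum_y)]
    return [value.real for value in dft(estimate, inverse=True)]


n = 16
kernel = [0.0] * n
kernel[0], kernel[1], kernel[-1] = 0.5, 0.25, 0.25  # symmetric three-tap blur

# Two contact points, loosely "shoulder" and "hip".
body = [0.0] * n
body[3] = 1.0
body[10] = 1.0
measured = circular_blur(body, kernel)
recovered = wiener_deconvolve(measured, kernel, reg=1e-3)

# A pattern alternating cell by cell sits at the one frequency this kernel zeroes.
alternating = [1.0 if i % 2 == 0 else 0.0 for i in range(n)]
lost = wiener_deconvolve(circular_blur(alternating, kernel), kernel, reg=1e-3)
```

The two contact peaks come back noticeably sharper than they were measured, while the alternating pattern returns as a flat field: the kernel's response is zero at that frequency, so no choice of `reg` can bring the detail back.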

If every mattress behaved the same way, the blur would be a fixed transformation and a single inverse would recover posture on any bed. Mattresses do not behave the same way. Memory foam softens at body temperature and delays pressure in time. Latex spreads it out in space with high fidelity. Pocket coils scatter it through discrete, nonlinear spring paths that depend on which coil happens to be touched. Each material has its own impulse response, and those responses change with temperature, load, and age.

A single fixed deconvolution cannot recover posture across mattress types. The only way through is to fix the mattress — build one stack whose blur kernel you understand well enough to invert — and then build the model against that specific stack.

To characterise a candidate composition, the team ran a controlled probe. Rigid objects of known geometry and known weight — blocks, cylinders, L-shapes — were placed on a single-column test rig with a 24 × 16 pressure grid at 10 mm pitch. Beside the mattress, the same object sat on a bare reference array: same cell pitch, same electronics, same weight, same position. The bare reading is ground truth. The reading through the mattress is the convolution of that ground truth with the stack’s point-spread function, plus sensor noise.

They ran this across fourteen compositions, varying material, thickness in one-inch increments, and layer order. Nine positions across the grid, multiple loads. For each composition a regularised least-squares fit recovered the point-spread function, and each was then scored on three axes: how narrow the recovered PSF was, how much noise the grid saw, and how close the through-mattress pressure field stayed to the Weber threshold under a body-shaped load.
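For intuition, a regularised least-squares fit of a blur kernel has a closed form in the Fourier domain when the blur is modelled as circular convolution. The sketch below is a one-dimensional toy under that assumption, with hypothetical probe shapes and a hypothetical kernel; it is not the team's fitting code.

```python
import cmath
from typing import List


def dft(x: List[complex], inverse: bool = False) -> List[complex]:
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(x[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n) for m in range(n))
        out.append(total / n if inverse else total)
    return out


def circular_blur(signal: List[float], kernel: List[float]) -> List[float]:
    n = len(signal)
    return [sum(signal[(i - m) % n] * kernel[m] for m in range(n)) for i in range(n)]


def fit_psf(references: List[List[float]], readings: List[List[float]],
            reg: float) -> List[float]:
    """Minimise sum ||y - h * x||^2 + reg * ||h||^2 over the kernel h.

    Per frequency: H = sum(conj(X) * Y) / (sum(|X|^2) + reg).
    """
    n = len(references[0])
    numerator = [0j] * n
    denominator = [0.0] * n
    for x, y in zip(references, readings):
        spectrum_x, spectrum_y = dft(x), dft(y)
        for k in range(n):
            numerator[k] += spectrum_x[k].conjugate() * spectrum_y[k]
            denominator[k] += abs(spectrum_x[k]) ** 2
    spectrum_h = [numerator[k] / (denominator[k] + reg) for k in range(n)]
    return [value.real for value in dft(spectrum_h, inverse=True)]


n = 16
true_psf = [0.0] * n
true_psf[0], true_psf[1], true_psf[-1] = 0.5, 0.25, 0.25  # hypothetical stack blur

# Bare-array readings of two rigid probes: a 2-cell block and a 3-cell block.
block_a = [1.0, 1.0] + [0.0] * (n - 2)
block_b = [0.0] * 5 + [1.0, 1.0, 1.0] + [0.0] * (n - 8)
through_mattress = [circular_blur(block_a, true_psf), circular_blur(block_b, true_psf)]

fitted = fit_psf([block_a, block_b], through_mattress, reg=1e-6)
```

Two probes of different widths are used because each block shape is blind at some spatial frequencies; together they cover the spectrum, which mirrors the use of several object geometries in the probe.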

The three axes trade against each other by construction. Pushing distortion down raises stiffness and pulls comfort down. Pushing signal-to-noise up favours thinner, denser foam and pulls comfort down further. Pushing comfort up with softer or thicker layers widens the PSF. There is no global winner — only a Pareto front. The composition Water ships sits at the intersection of the comfort threshold and the minimum-distortion corner of that front.
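A Pareto front is the set of candidates that no other candidate beats on every axis at once. A minimal sketch, with made-up scores for four hypothetical compositions (lower is better on all three axes here; the field names and numbers are illustrative only):

```python
from typing import List, NamedTuple


class Composition(NamedTuple):
    name: str
    psf_width_mm: float   # narrower point-spread function is better
    noise: float          # lower sensor noise is better
    comfort_gap: float    # distance from the comfort threshold, lower is better


def dominates(a: Composition, b: Composition) -> bool:
    """True when a is no worse than b on every axis and strictly better on one."""
    a_scores, b_scores = a[1:], b[1:]
    no_worse = all(x <= y for x, y in zip(a_scores, b_scores))
    strictly_better = any(x < y for x, y in zip(a_scores, b_scores))
    return no_worse and strictly_better


def pareto_front(candidates: List[Composition]) -> List[Composition]:
    """Keep every candidate that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


candidates = [
    Composition("A", 30.0, 0.2, 0.04),
    Composition("B", 40.0, 0.3, 0.05),   # worse than A on every axis
    Composition("C", 25.0, 0.4, 0.06),
    Composition("D", 45.0, 0.1, 0.02),
]
front = pareto_front(candidates)
```

Only B drops out. A, C and D each win on at least one axis, so choosing among them is a design decision rather than an optimisation result.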

It is tempting to conclude that the stiffest composition wins, because lateral spreading is the enemy. It does not.

Put a metal plate above the sensor. Apply a point force anywhere on its surface. The plate is rigid — it cannot deform locally, only translate or tilt as a whole. The force distributes itself nearly uniformly across the entire bearing area underneath. Every sensor cell reads roughly the same value regardless of where the load was. You have not measured where the force is. You have only measured that a force exists.

Soft compositions fail the opposite way. The layer deforms too readily. The PSF widens into a broad halo. Neighbouring features overlap into one warm region.

“If you put full latex, it curves. Sensor is gone. Impossible. The machine won’t work.”

Plot spatial resolution against effective layer stiffness and you get a curve that rises from the soft end, peaks at a particular stiffness, and falls toward the rigid end. It is single-peaked, not monotonic. Below the peak, the layer spreads the load laterally. Above the peak, it refuses to deform locally. Water’s layer of latex above super-soft foam sits near the peak.

[Figure: spatial resolution against effective layer stiffness. Labels: PSF smears (soft end); CAMA, peak resolution; metal plate, no localisation.]

A mattress layer’s loss factor — energy dissipated per cycle divided by energy stored — tells you how long the material remembers the last load. Natural latex sits around η ≈ 0.15 with ~85% energy return. HR polyurethane around 0.40, ~60%. Memory foam around 0.65, ~35%.

In a comfort-only world, this is a feel parameter. In a deconvolution world it is temporal coupling. A high-loss material carries residual strain from one actuator frame into the next, and the sensor reads a low-pass smear of past pressure fields instead of the current one. For tested memory foam grades, the Deborah number — retardation time over actuator cycle — sits between 3 and 8 against a one-second cycle. The material cannot be reshaped between frames. Every sensor frame is contaminated by the last.

Super-soft foam in Water’s comfort layer has a Deborah number near one. It settles within a single cycle. Memory foam also fails on temperature alone — its modulus shifts by a factor of three to five between room temperature and skin temperature, a variation the model cannot compensate for without per-user thermal calibration. That is why there is no memory foam anywhere above Water’s sensor. Not because it feels bad. Because it destroys the signal.

Other candidates failed for their own reasons. A six-inch latex monolith (one slab per column, do everything) has an axial resonance with an eighty-kilogram load near 3.4 Hz, inside the 2–5 Hz band of body sway. The three-layer stack spreads the same energy across several damped modes with no dominant peak. Pocket coils buckled under actuator eccentricity within a few hundred cycles once the lateral bracing of neighbouring coils was taken away by the discrete-column geometry. HD polyurethane at the base, without rebonded foam above it, compressed beyond 20% permanent set inside a two-year-equivalent accelerated-aging schedule.

“On a conventional mattress, sleepers drift across the surface and wear redistributes. On a discrete column, the hip column takes the hip load every night and never gets relief.”

The rebonded foam’s mechanically interlocked structure stayed below 10% permanent set over the same schedule.

Water did not pick a mattress first and then try to deconvolve through it. The team ran the loop in the other direction. Build a prototype stack. Measure the point-spread function against the reference array. Train the landmark model against the resulting sensor field. Measure where the model failed. Change the stack to make the next round of deconvolution easier. Return to step one.

“Countless sleepless nights, across our seven-member team, to invent this composition.”

The mattress that ships is the fixed point of that loop. Every layer earns its position in the stack by surviving an experiment against the layers around it. The order — latex, super-soft foam, pressure grid, rebonded foam, birch ply, actuator — is the only permutation that placed every material where its own properties did the work.

A conventional mattress distributes load. Water’s mattress routes it — down an independent path per column, cushioned above, sensed in the middle, structurally supported below.

“The mattress is not the product. The product is a bed that knows where the body is, and a mechanism that uses that knowledge to hold the spine where it should be, breath by breath, all night. The mattress is the filter we chose because, of everything we tried, it was the one we could invert.”

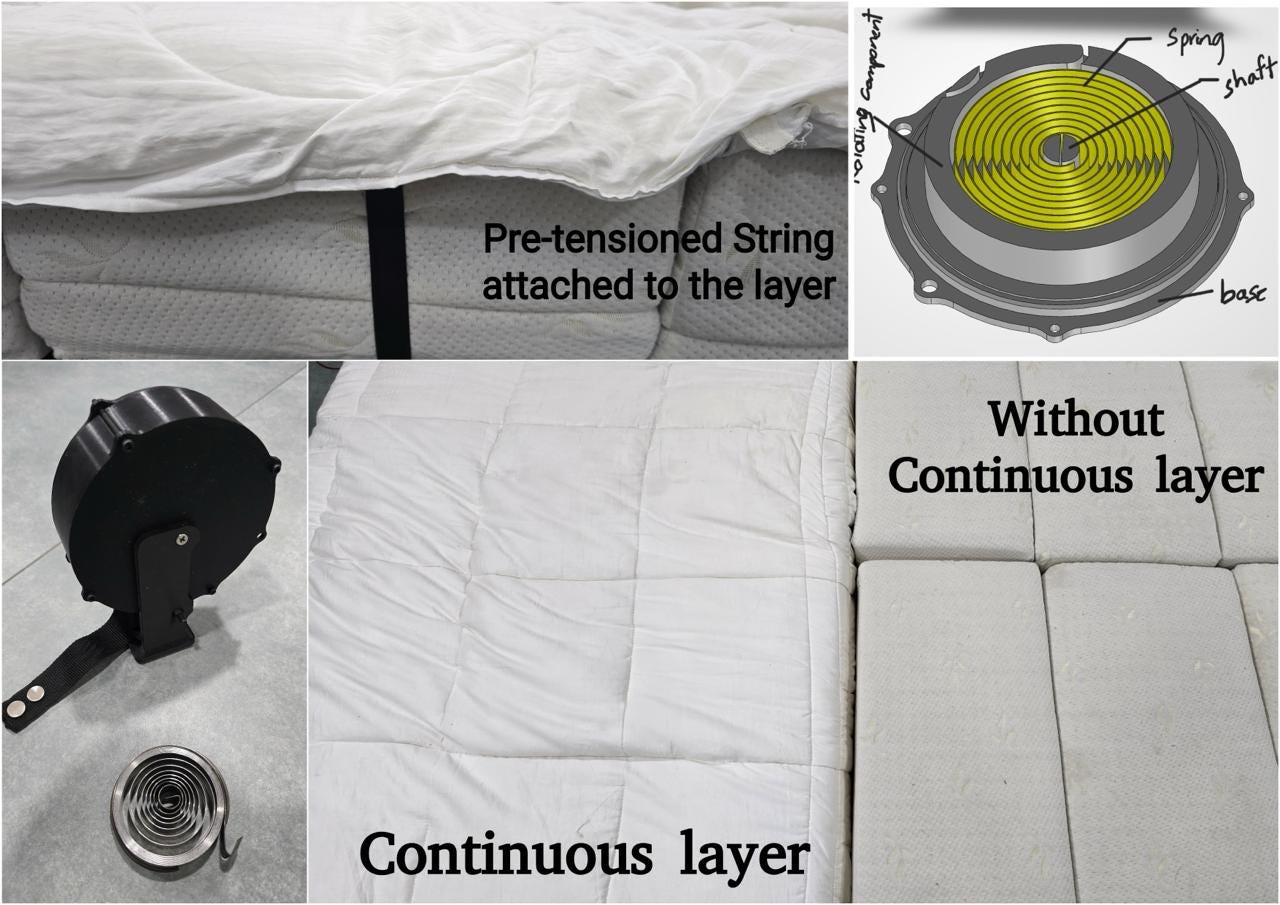

From discrete to continuous

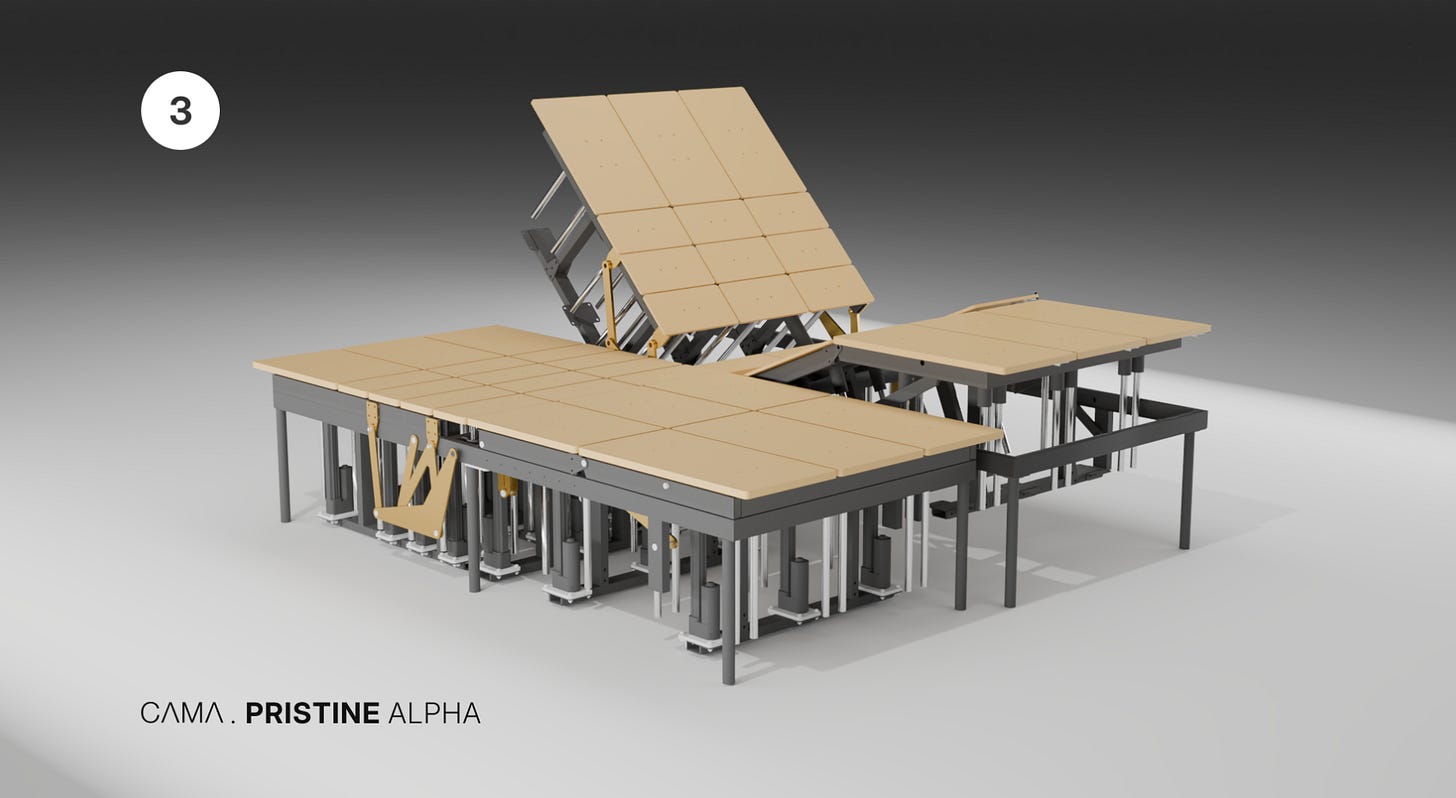

The bed’s mechanism is 42 independently actuated blocks.

Each is one foot wide with 250 mm of vertical travel. Together, they produce a height map across the bed surface. But what the mechanism outputs is a step function — a staircase. And a staircase and a sleeping body are geometrically incompatible.

The layer between mechanism and human has to transform that staircase into a smooth, continuous surface — passively, without power, thousands of times a night. And if the transformation fails — if an elbow finds the gap between blocks, if a shoulder detects the step — the product has failed regardless of how good the intelligence underneath is.

There were five constraints to hold simultaneously. The surface had to conform to 250 mm of travel per zone. It had to achieve zero edge detection by the sleeper. It had to survive thousands of transitions per night without degrading. It had to fully seal the electronics from liquid. And it had to feel like premium bedding. No compromise on any of them.

The obvious first move was a fabric that stretches. Give it enough elongation and it follows any block height. This is where most attempts start, and where most of them break.

Stretch fabrics — spandex, Lycra, TPU composites — obey Hooke’s law at the fabric level. As a block rises, strain increases, and so does the force resisting the actuator. The layer stops being a passive surface and becomes a mechanical participant, distorting the pressure profile the sleeper feels.

The team moved to geometry. Three approaches were serious candidates.

Miura-ori — a rigid-foldable origami tessellation. The problem: it is kinematically coupled. When one section rises, the adjacent geometry must follow. Each of the 42 blocks is independent. The crease lines also create a faceted texture perceptible to the skin.

Auxetic fabrics — reentrant hexagonal geometry that produces a negative Poisson’s ratio. The geometry works on paper. The surface feel does not. The open hexagonal lattice creates pressure concentration points at every hinge. And the yarn-on-yarn friction at each node produces acoustic noise during adjustments.

Kirigami — strategic cuts that reduce the sheet’s effective stiffness. Under thousands of micro-adjustment cycles, crack propagation from cut tips is unavoidable. The snap-through between folded and deployed states generates an audible click.

Every geometric approach hit the same wall. Methods that produce displacement through structured folding create structures. Structures have edges, nodes, and hinge points. A sleeping body detects all of them.

The breakthrough came from reframing the problem.

Trying to make the fabric itself do the conformance work was wrong. The fabric should not be a mechanism. It should be a passive surface that gets fed slack as needed and pulled taut when the need is gone.

Springs connected to the fabric perimeter could do that. But which kind? Linear springs have a restoring force proportional to extension — when multiple blocks are at different heights, tension across the fabric becomes non-uniform. The sleeper feels it as an inconsistency.

What they needed was a spring where the force is independent of extension. A horizontal line on the force-displacement curve. Constant force regardless of how far the spring has extended. Uniform tension whether one block is raised or twenty.

Constant-force springs are pre-stressed coils of spring steel. The geometry of uncoiling offsets the material’s elastic restoring tendency, producing a flat force output across the working range.
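The difference between the two spring types is easy to see numerically. The sketch below compares edge tension at three block heights; the linear stiffness and the extensions are hypothetical values chosen for illustration, and the 10 N rating is used only as an example figure.

```python
from typing import List


def linear_spring_force(extension_mm: float, stiffness_n_per_mm: float) -> float:
    """Hooke's law: force grows in proportion to extension."""
    return stiffness_n_per_mm * extension_mm


def constant_force_spring(extension_mm: float, rated_force_n: float) -> float:
    """Idealised constant-force spring: flat output across its working range."""
    return rated_force_n if extension_mm > 0 else 0.0


def tension_spread(forces: List[float]) -> float:
    """Difference between the highest and lowest edge tension."""
    return max(forces) - min(forces)


extensions_mm = [20.0, 120.0, 250.0]  # three blocks at different heights

linear = [linear_spring_force(x, stiffness_n_per_mm=0.04) for x in extensions_mm]
constant = [constant_force_spring(x, rated_force_n=10.0) for x in extensions_mm]

linear_spread = tension_spread(linear)      # tension varies block to block
constant_spread = tension_spread(constant)  # tension is the same everywhere
```

With the linear spring the tension ranges from under 1 N to 10 N across the three blocks; with the constant-force spring the spread is zero.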

The remaining question was what force constant to set. Model the fabric spanning a block gap as a membrane under tension, loaded by body weight. At low tension, the fabric sags between blocks — fingers, elbows, and hip bones sink into the gaps. At high tension, the fabric becomes a drumhead — it bridges across blocks instead of draping over them.

The lower bound comes from anatomy. The upper bound comes from haptics. Those two constraints, applied to the block geometry and a 95th-percentile body weight distribution, intersect at one number: 10 N.

That single number — 10 N — determines every physical dimension of the spring: strip width, strip thickness, coil diameter, and number of turns. Every dimension is downstream of one force constant and one slack budget.

The fabric carries a mulberry silk filling between the top sheet and the mechanism. Too much filling and the body pushes through it, sinking past the smoothing effect to feel the blocks underneath. The pressure sensor readings also degrade — too much compliant material acts as a low-pass filter, blurring the signal. Too little filling and the step discontinuity between adjacent zones becomes tactile. Fill density had to land in a narrow window.

During cyclic testing, the team noticed something unexpected. When all blocks return to neutral and body weight is removed, the constant-force springs retract all slack simultaneously from every edge. The fabric reaches its minimum-energy state: flat, taut, wrinkle-free. The surface resets itself. Every time. A direct mechanical consequence of the spring geometry, not a designed feature. That became a patent.

Sleeping on water

The bed has no opinion about what your body should look like. It becomes whatever your body needs, one millimetre at a time, timed to your breathing.

The system’s sensors track your respiratory pattern and actuate only during exhalation, when the body is naturally more relaxed. You do not feel the bed adjusting. You wake up without knowing anything happened.

“The bed actuates during exhalation because your body is naturally more relaxed at that point. If we move during inhalation, you feel it. During exhalation, you don’t. That one insight took us months to figure out.”
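Exhalation-gated movement can be sketched as a filter on when a step is allowed. The example assumes a sampled respiration signal that rises on inhalation and falls on exhalation, and a one-millimetre step per sample; the signal shape, names, and sampling are assumptions for illustration.

```python
from typing import List


def exhalation_mask(respiration: List[float]) -> List[bool]:
    """Mark samples where the signal is falling (assumed to mean exhalation)."""
    return [False] + [b < a for a, b in zip(respiration, respiration[1:])]


def plan_moves(respiration: List[float], start_mm: float, target_mm: float,
               step_mm: float = 1.0) -> List[float]:
    """Move toward the target in small steps, but only while exhaling."""
    positions = []
    position = start_mm
    for exhaling in exhalation_mask(respiration):
        if exhaling and position != target_mm:
            # Limit each move to one step in either direction.
            delta = max(-step_mm, min(step_mm, target_mm - position))
            position += delta
        positions.append(position)
    return positions


breath = [0.0, 1.0, 2.0, 1.0, 0.0, 1.0, 2.0, 1.0, 0.0]  # two breaths
trajectory = plan_moves(breath, start_mm=0.0, target_mm=3.0)
```

The actuator holds still through each inhalation and advances one millimetre per sample during each exhalation until it reaches the target.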

But the clinical applications are where the bed starts to matter beyond comfort.

Ninety percent of couples, when they are intimate, default to the missionary position. If either partner has a stomach, this becomes physically difficult. Doctors recommend placing a pillow under the hips.

As Teja points out, nobody actually does that.

“Doctor says, ‘please use a pillow behind the bum of your partner’. Nobody does that. Sexual satisfaction scores, when we ran the study were 40% for women and 70% for men.”

The bed can do it silently — adjust the pelvic zone by a few degrees without anyone pressing a button.

Menstrual cramps: the bed can adjust to relieve abdominal pressure.

Pregnancy support: the surface reconfigures as the body changes week by week.

Breastfeeding at 3 am: the bed adjusts to a nursing-friendly recline without the mother fumbling for a switch in the dark.

There is the problem of sleeping with your hand under your partner. You lie on your side, your arm extended under their neck or head. Within twenty minutes, the arm goes numb.

The bed can detect the compressed limb through pressure mapping and raise the zone under the partner’s head by a few millimetres — just enough to relieve the pressure on the trapped arm without waking either person.

These are not features listed on a spec sheet. They are things that happen when a surface understands human anatomy in real time.

At CES 2026 in Las Vegas, the team took 173 meetings in four days. According to Teja, around 85% of the people who tried the bed expressed interest in buying one.

Steven Bathiche — who led the Applied Sciences group at Microsoft and was behind the original Surface — spent over an hour at the booth.

“At CES, people would lie down and within thirty seconds they would say — I need this. Engineers, grandmothers, tourists. It did not matter where they came from. The body understands what the body needs.”

Visitors who happened upon the booth — engineers, families, industry veterans — tried the bed and wanted to know how to buy one.

“If I sell the bed to you, I will feel personally responsible for every single bed. If even one customer's experience is not that 'wow' feeling — then the product is not ready.”

Safety

The bed runs on 24V DC — laptop-grade power, not mains voltage. A waterproof layer fully seals the electronics. Fuses trip in 1.4 milliseconds.

Mechanical liquid drainage ensures that even in the worst case (a spilled drink, a child’s accident) the fluid routes away from the electronics through gravity alone. The frame is aerospace-grade aluminium, which is non-flammable.

The bed has absolute limits on angle, pressure, and speed, set below the point where movement becomes risky for humans. These are hard-coded, not learned.

Teja does not trust any AI model enough to let it push a human body beyond clinical safety thresholds.
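In code, hard limits of this kind usually sit between the model and the actuators as a clamp that no learned component can override. A minimal sketch, with hypothetical limit values and field names:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HardLimits:
    max_tilt_deg: float
    max_speed_mm_s: float
    max_zone_pressure_kpa: float


@dataclass(frozen=True)
class Command:
    tilt_deg: float
    speed_mm_s: float
    zone_pressure_kpa: float


# Hypothetical values; frozen so nothing can modify them at run time.
LIMITS = HardLimits(max_tilt_deg=15.0, max_speed_mm_s=2.0, max_zone_pressure_kpa=8.0)


def clamp(value: float, limit: float) -> float:
    """Restrict a signed value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def enforce(command: Command, limits: HardLimits = LIMITS) -> Command:
    """Return the command with every field forced inside the hard limits."""
    return Command(
        tilt_deg=clamp(command.tilt_deg, limits.max_tilt_deg),
        speed_mm_s=clamp(command.speed_mm_s, limits.max_speed_mm_s),
        zone_pressure_kpa=min(command.zone_pressure_kpa, limits.max_zone_pressure_kpa),
    )


model_output = Command(tilt_deg=40.0, speed_mm_s=-5.0, zone_pressure_kpa=3.0)
safe = enforce(model_output)
```

Whatever the model proposes, the actuators only ever see the clamped command.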

The chair

The chair came from the same technology. But it proves something important about the 70B-parameter model family.

Most models in the Water Family (Posture Classification, Keypoint Detection, Pressure Relief, the RL engine) transfer directly from the bed to the chair at near-zero marginal R&D cost.

The architecture transfers across surfaces — but not every model ports cleanly. Pressure ID for the chair is, in Teja’s words, ‘a beast in itself.’ It is still in research.

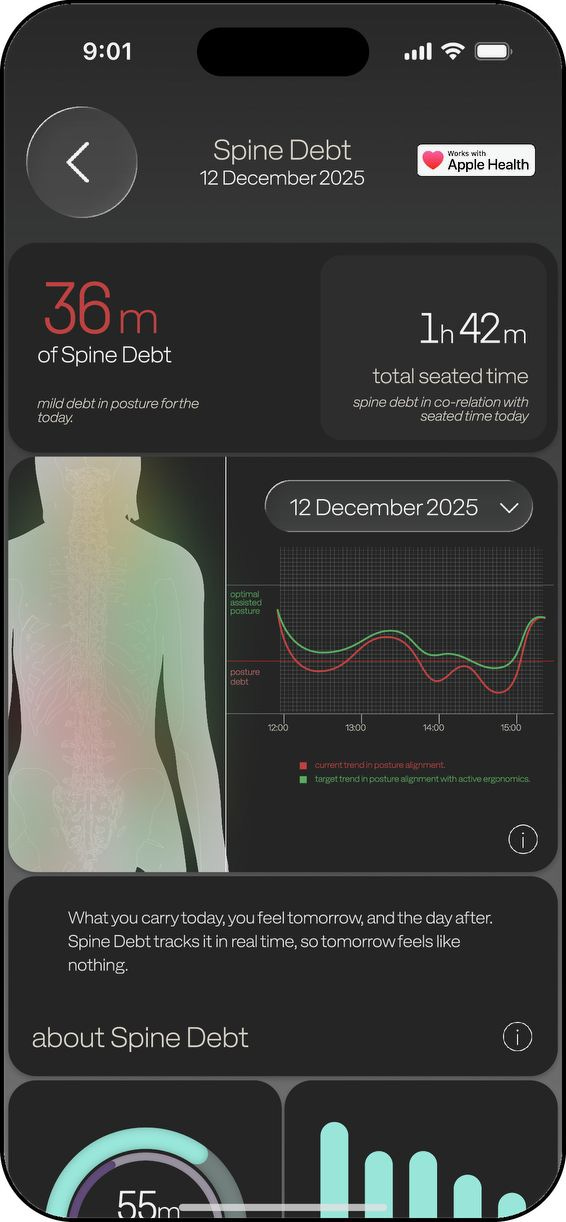

The chair also introduces a new concept: Spine Debt.

The same way technical debt accumulates in software — bad decisions that compound over time — Spine Debt is a running tally of what bad posture costs your body.

The app tracks it in real time. It shows you when you are accumulating debt and when you are paying it off.

“Technical debt in software — bad decisions compound over time. Spine Debt is the same thing for your body. Every hour of bad posture is debt. The app shows you when you’re accumulating it and when you’re paying it off.”

“Current wearable devices just track what’s happening making them late-20th century tech. The new world shouldn’t just tell what’s happening but also just take action too.”

The chair is where Contextual Intelligence gets built first.

The chair has a richer activity vocabulary than the bed — coding, video calls, focused reading, casual browsing, and standing breaks.

A software agent runs on your laptop, reads which application is in the foreground, and feeds that signal to the chair. If you switch from email to a focused coding session, the chair will adjust posture support accordingly.

A monitor stand syncs its height with the chair position. The chair learns your daily rhythm, tracks your productivity patterns, and identifies which postures correlate with your best work.

Once validated on the chair, Contextual Intelligence ports back to the bed and extends to every future surface.

“The chair is much easier to do contextual intelligence on than the bed. The chair has a richer vocabulary — coding, video calls, reading, browsing, standing breaks. We're doing it on the chair first and then we'll put it into the bed when it's ready.”

The vision is a single-user graph that follows you from surface to surface. If the chair detects a high-tension workday, the bed could eventually adjust your recovery parameters that night.

Each additional surface would increase the data density and improve the intelligence for every other surface.

A market hiding in plain sight

Before we talk about the global market, let’s do an India-specific thought experiment.

The passenger car industry in India — just eight companies — controls approximately Rs 12 lakh crore in market capitalisation.

Annual sales: roughly 42 lakh cars. Almost half of this market is made up of SUV sales. And the average price per car sold in India has risen to approximately Rs 10–12 lakhs, compared to Rs 7–8 lakhs just five to six years ago.

Is it too wild to imagine that the customer who spends this much on a car would also consider investing in beds and chairs that actively support their spine 24x7?

Teja is betting that they will — they just do not know an offering exists yet.

Now consider what those companies actually sell. A car is a set of surfaces that interact with the human body — a seat, a steering wheel, a dashboard — mounted on an engine and four wheels. The average Indian spends maybe an hour or two a day in a car.

The same Indian spends eight hours a day on a bed and ten hours in a chair.

There is no equivalent industry for the surfaces where humans actually spend their lives. The mattress market is fragmented, unbranded, and sells passive foam.

Branded mattress companies have more MBAs than AI researchers. Office chairs have not changed in decades.

The same holds outside India, with one difference. Globally, the mattress industry is branded — but dumb.

It was worth roughly $57 billion in 2025. Tempur Sealy, Serta Simmons, Purple, Sleep Number — the biggest names in the world — sell foam and springs with marginal technology wrappers.

Meanwhile, the willingness to pay to understand these hours already exists. Oura, Whoop, Apple Watch, Eight Sleep — billions of dollars flow into devices that measure heart rate, respiratory rate, and sleep.

The wearable is an observer. It shows you a chart in the morning. A bed with pressure sensors reads the same heart rate and respiratory rate passively, while the sleeper is on it — and then acts on what it reads. If a movement triggers a heart rate spike, the bed learns to move differently next time.

This is what pressure plus actuation enables, and a wrist-worn device cannot: sensing and responding to the body in the same moment.

The capital going elsewhere makes the gap more visible. In 2025, billions in venture funding poured into humanoid robotics. Figure AI crossed $1 billion in a Series C at a $39 billion valuation. Apptronik raised over $1 billion. Physical Intelligence raised $600 million. Agility Robotics raised $400 million.

The bet there is that robots will walk around the world doing jobs humans do today. Almost nothing went into the non-living things humans already sit on, lie on, and spend their lives inside. Every home on earth has a bed. Every office on earth has a chair. Both are uninstrumented.

“Tell me one surface that’s intelligent about the human body. There isn’t one. The most advanced office chair in the world has zero sensors.”

“A car can be shown off — and that is important in some countries. But you spend more time in your bed and your chair than in a car. When the shift in thought happens, people will start putting money into their sleep and their sitting posture.”

The team

Teja is the system architect, electronics designer, and the math-heavy ML lead. He is the kind of founder who builds it himself and hires people who can keep up.

His co-founder, Mourya Desu, handles supply chain and operations. That description undersells him.

Mourya’s family has run a furniture distribution business in Andhra Pradesh for thirty-five years — a network that reaches villages of 100,000+ homes through a handful of stores. He joined his father’s business full-time in 2021 and spent four years inside that supply chain before coming to Water.

That is specific knowledge that an IIT/IIM degree does not buy.

“In China, for one rupee they’ll give you an actuator. For ten rupees, they’ll give you an actuator. For a thousand, they’ll give you an actuator. You have to go and check their factories to know which one will give you what you deserve.”

Mourya spent a month in China in 2024, finding the vendors who would ship motors and components at prices Water’s bed could absorb.

The aluminium bevel-cutting machine came from a vendor he tracked down in Ahmedabad, at a noticeably lower price than comparable machines in places like Coimbatore.

The difference is thirty-five years of family relationships in a supply chain that most engineers have never walked into.

“Most talents who have grown up in the comfort of software startups will not bend their back and go talk to a vendor in a factory somewhere in the corner of China or India. Mourya will. That is the reason this company has a working R&D today at a fraction of the cost.”

The team is a math-heavy group of engineers, small enough that everyone is in the room for every decision.

“The beauty with such people is I don’t need to complete my statement. I say seven words and they already know the paragraph.”

Sahithi and Teja first crossed paths at Microsoft, where he was a product manager and she was a UX researcher on one of his projects.

Later, when Teja moved to Multiplier and was hiring, Sahithi joined him there — she ran Multiplier’s local labour-law compliance engine and reported to Teja for three years.

Building Great Physical AI Consumer Products

The humanoid bet may pay off. But Jeff Bezos has a useful question: instead of asking what will change in the next ten years, ask what won’t change. You can build a durable business around the things that stay the same.

Here is what will not change: humans will sleep. They will sit.

“They will need their spines supported, their pressure relieved, their bodies understood. No technology trend will alter the fact that people spend eight hours a day in bed and ten hours in a chair. The surfaces are permanent. What changes is whether those surfaces are intelligent.”

“Build surfaces that sense and respond to the human body. Use that to build the deepest body intelligence platform on earth. Prove dead matter can become alive and caring. Take that proof towards programmable matter.”

Water Robotics is building Physical AI for products people already use. Not humanoid robots that need to learn to walk. Surfaces that already exist, made intelligent. The bed, the chair, the car seat, the sofa — objects that have been inert for centuries, given the ability to sense and respond to the human body.

Capital Raise: Water Robotics is raising a seed round. Contact us at banjan@tal64.com if interested in participating.

Methodology: This story is based on extensive interviews with Teja Vinukollu, Founder and CEO of Water Robotics, conducted over multiple sessions in Hyderabad. It also draws on technical documentation, research articles by the team, and the author’s direct observation of the products and team. This is NOT a paid article.